Texturly vs Quixel Megascans: The Future of On-Demand Custom Textures

By Mira Kapoor | 7 January 2026 | 12 mins read

For years, the choice for high-end textures has felt like a compromise. On one hand, you have the incredible, ground-truth library of Quixel Megascans: predictable, photorealistic, and the industry standard. On the other hand, you have the project’s specific creative needs, the demand for that unique sci-fi panel or stylized fantasy brick that simply doesn’t exist in any library. This is a practical, head-to-head breakdown of the two philosophies solving this problem: Quixel’s curated, library-first workflow versus Texturly’s on-demand AI generation. We’re moving beyond feature lists to compare the core workflows, pipeline integrations, and trade-offs to help you decide which approach will actually make your team faster and more creative.

The two philosophies of 3D texture creation

At the heart of the Texturly vs. Quixel Megascans debate are two fundamentally different approaches to getting a texture. It’s not just about the final pixels; it’s about how you get there. One is about curating reality, the other is about creating it on demand.

The established standard: Quixel's curated scan library

For years, Quixel Megascans has been the gold standard for photorealism, and for good reason. It’s built on a simple, powerful idea: capture the real world with painstaking detail and deliver it to artists in a massive, searchable library. Think of it as a digital archive of reality. Every crack in the pavement, every vein on a leaf, is captured through photogrammetry, ensuring that the PBR data (albedo, roughness, and normal) is as true to life as possible.

Quixel Mixer then enters the workflow as the artist's workbench. It’s not for creating materials from scratch, but for expertly blending and editing these pre-made, scanned assets. Need to add moss to a brick wall or dust to a metal plate? You grab those elements from the library and paint or mask them in Mixer. It’s a workflow rooted in assembly and refinement, giving you control over high-quality, pre-approved ingredients.

This approach delivers unparalleled realism and consistency. But its strength is also its limitation: you can only work with what has been scanned.

The new contender: Texturly on-demand AI texture generation

Texturly represents a paradigm shift. Instead of searching a library for a texture that almost fits, you describe the exact texture you need, and an AI generates it. The workflow starts not with a search bar, but with a text prompt: “Gouged alien metal panel with glowing blue circuits and light rust.”

This is the core of AI-powered texture design. Texturly translates your creative intent into a full PBR material, complete with all necessary maps. Importantly, Texturly provides two distinct entry points for material creation:

- Prompt-to-Texture: Generating entirely new, unique textures from a text description.

- Image-to-Texture: Uploading any existing image (a photograph, a piece of concept art, or a previous render) and using the AI to automatically make it seamless and tileable.

For both methods, the tool then automatically generates a complete set of PBR maps from the base image (Albedo, Normal, Roughness, Height, etc.). It’s not pulling from a static library; it’s synthesizing a new, unique asset from its understanding of material properties. This on-demand model moves the artist from a curator of existing assets to a director of generative ones.
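To make the idea of deriving one PBR map from another concrete, here is a minimal sketch of the classic, non-AI way to turn a height map into a normal map using finite differences. This is not Texturly's method, which the article describes only as AI-driven; the function names `height_to_normal` and `encode_rgb` and the `strength` parameter are illustrative assumptions.

```python
import math

def height_to_normal(height, strength=1.0):
    """Convert a 2D height grid (rows of floats in [0, 1]) to unit normals.

    Neighbours wrap around the edges, so a tileable height map
    produces a tileable normal map. Illustrative sketch only.
    """
    rows, cols = len(height), len(height[0])
    normals = []
    for y in range(rows):
        row = []
        for x in range(cols):
            # Central differences with wrap-around indexing.
            dx = (height[y][(x + 1) % cols] - height[y][(x - 1) % cols]) * 0.5
            dy = (height[(y + 1) % rows][x] - height[(y - 1) % rows][x]) * 0.5
            # The surface normal tilts away from the direction of increasing height.
            nx, ny, nz = -dx * strength, -dy * strength, 1.0
            length = math.sqrt(nx * nx + ny * ny + nz * nz)
            row.append((nx / length, ny / length, nz / length))
        normals.append(row)
    return normals

def encode_rgb(normal):
    """Pack a unit normal from [-1, 1] per component into 8-bit RGB."""
    return tuple(round((c * 0.5 + 0.5) * 255) for c in normal)

flat = [[0.5] * 4 for _ in range(4)]
print(encode_rgb(height_to_normal(flat)[0][0]))  # (128, 128, 255)
```

A perfectly flat surface encodes to the familiar light blue of a normal map. Engines disagree on the sign of the green channel, so a real pipeline would need to match the target engine's convention.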

This process promises infinite variation and speed, but it also introduces new questions about artistic control and the nuances of prompting. The challenge isn't finding the right asset, but articulating the right vision.

A deep dive into the Quixel ecosystem (Megascans + Mixer)

To understand where Quixel shines, you have to appreciate the value of ground truth. In a world of digital creation, having a direct link to reality is a powerful anchor.

The core strength: An unmatched library of scanned assets

There are projects where close enough won't cut it. For architectural visualization, digital twins, or hyper-realistic film and game environments, real-world data is the benchmark. The Megascans library is more than just a collection of textures; it’s a curated, consistent, and quality-controlled ecosystem. Every asset is scanned under controlled lighting conditions and processed to be PBR-correct out of the box. This consistency is a massive time-saver. You know that a Swedish Clover Ground texture will work perfectly alongside a Himalayan Slate Cliff because they were built on the same principles.

This removes a huge amount of guesswork from the look development process. For an Art Lead, this means a more predictable and stable pipeline. Artists can pull assets with confidence, knowing they will behave correctly in-engine without extensive tweaking.

The limitations of a library-based workflow

But what happens when the perfect texture doesn’t exist in the library? This is the primary creative bottleneck of a library-based workflow. You might need a specific type of sci-fi paneling or a stylized fantasy brick that simply hasn't been scanned. The solution is often to blend multiple existing textures in Mixer, but this can feel like a compromise, an approximation rather than a direct creation.

Then there’s the operational overhead. High-quality scanned assets are large. A single 8K material can eat up hundreds of megabytes. For a large studio, this translates to terabytes of local storage, slow downloads, and the constant headache of asset management and version control. An artist might spend 30 minutes searching for, downloading, and importing an asset, only to find it’s not quite right. That friction adds up, project after project.
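A quick back-of-the-envelope calculation shows where those megabytes come from. The sketch below estimates the uncompressed size of one material. The map set and bit depths in `MATERIAL_MAPS` are assumptions chosen for illustration, and real files on disk are usually smaller thanks to compression.

```python
def map_bytes(resolution, channels, bits_per_channel):
    """Uncompressed size in bytes of one square texture map."""
    return resolution * resolution * channels * bits_per_channel // 8

# Hypothetical map set: name -> (channels, bits per channel).
MATERIAL_MAPS = {
    "albedo": (3, 8),
    "normal": (3, 8),
    "roughness": (1, 8),
    "height": (1, 16),
}

def material_mib(resolution, maps=MATERIAL_MAPS):
    """Total uncompressed size of a material in MiB."""
    total = sum(map_bytes(resolution, c, b) for c, b in maps.values())
    return total / (1024 * 1024)

print(material_mib(8192))  # 576.0
print(material_mib(4096))  # 144.0
```

Under these assumptions, dropping from 8K to 4K cuts the footprint to a quarter, which is why resolution choices matter so much for storage planning.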

Exploring Texturly's AI-first workflow

Texturly’s approach is designed to solve the exact problems a library-based system creates: creative gaps and logistical friction. It trades the certainty of a curated library for the possibility of infinite creation.

The promise: Infinite customization and speed

AI texture generation disconnects material creation from physical constraints. You are no longer limited by what can be found and scanned or by the edges and imperfections of a single source image. Need a texture for “octopus skin fused with cyberpunk chrome”? You can generate it. Even “moss growing on a meteorite”? That too. And when you do have a reference you like, you can upload an existing texture image and make it seamlessly tileable, preserving its character while removing repetition artifacts. This opens the door for rapid iteration during concepting and look development. An environment artist can generate ten variations of a cobblestone path or refine a photographed one into a production-ready material in the time it would take to download one from a library.

This is especially powerful for projects that require a unique or stylized art direction. While Megascans excels at reproducing reality, Texturly excels at creating new realities and reworking reality when needed. It gives artists a tool to build materials that are truly bespoke to their project’s world, whether generated from a prompt or evolved from an existing image, ensuring a distinct visual identity that can’t be replicated by pulling from the same library everyone else is using.

The practicalities of AI-powered digital material creation

Of course, infinite doesn’t mean effortless. The shift to an AI-first workflow requires a new skill: prompting. Instead of knowing what to search for, artists must learn how to describe what they want. This involves a learning curve. A prompt like “brick wall” is too generic; a great prompt is specific, like “worn Victorian brick wall with peeling white paint and moss in the cracks.”
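One way to build the habit of specific prompts is to treat them as structured data: a base material, a condition, and a list of details. The helper below is a hypothetical sketch. `build_prompt` is not part of any Texturly API, and the phrasing it produces is just one reasonable convention.

```python
def build_prompt(material, condition=None, details=()):
    """Compose a texture prompt from a base material, an optional
    condition (e.g. "worn"), and a list of surface details.
    Hypothetical helper for illustration only.
    """
    parts = [f"{condition} {material}" if condition else material]
    if details:
        parts.append("with " + " and ".join(details))
    return " ".join(parts)

print(build_prompt("brick wall"))
# brick wall
print(build_prompt("Victorian brick wall", "worn",
                   ["peeling white paint", "moss in the cracks"]))
# worn Victorian brick wall with peeling white paint and moss in the cracks
```

Keeping the pieces separate makes it easy to generate variations by swapping one detail at a time.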

Maintaining artistic control is also key. Early generative tools often produced results that felt random or had noticeable artifacts. Modern professional texturing software like Texturly gives artists more control, allowing them to refine outputs, make textures tileable from reference images, generate PBR maps, and tweak the final base texture and its PBR maps using intuitive filters like Brightness, Contrast, Saturation, and Sharpness. The goal isn’t to have the AI do all the work, but to use it as an incredibly fast and creative starting point that the artist then hones to production quality. It’s a collaboration between human intent and machine execution.
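For a sense of what filters like Brightness and Contrast do under the hood, here is a minimal sketch using one common formulation: contrast scales values around mid-grey, brightness adds an offset, and the result is clamped. How Texturly implements its sliders is not documented in this article, so treat the formula and the names `adjust` and `adjust_image` as assumptions.

```python
def adjust(value, brightness=0.0, contrast=1.0):
    """Scale a [0, 1] value around mid-grey by `contrast`,
    shift it by `brightness`, and clamp back to [0, 1].
    """
    out = (value - 0.5) * contrast + 0.5 + brightness
    return min(1.0, max(0.0, out))

def adjust_image(pixels, brightness=0.0, contrast=1.0):
    """Apply the same adjustment to every value in a 2D grid."""
    return [[adjust(v, brightness, contrast) for v in row] for row in pixels]

print(adjust(0.75, contrast=2.0))    # 1.0
print(adjust(0.5, brightness=0.25))  # 0.75
```

On a roughness map, for example, raising contrast in this way pushes glossy and matte areas further apart.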

Head-to-head: Texturly vs Quixel Megascans

So, how do these two philosophies stack up in practice? Let's break it down by what matters most in a production pipeline.

Feature comparison: A side-by-side look

Source and creation workflow

- Quixel Megascans: The workflow starts with the library. Textures are sourced from ground-truth, real-world scans (photogrammetry). They are high-quality, pre-made assets that you search for, download, and use.

- Texturly: The workflow starts with creation. Textures are generated on demand, either through a text prompt (Prompt-to-Texture) for infinite creative freedom, or by uploading an existing image (Image-to-Seamless PBR).

Seamless tiling and PBR map generation

This is where Texturly introduces powerful, time-saving automation:

- Quixel Megascans: Assets are delivered as pre-tiled materials. The PBR maps (Normal, Roughness, Height, etc.) are included as part of the scan data.

- Texturly: It features a critical utility: the ability to automatically convert any uploaded image into a seamless, tileable texture. Additionally, Texturly's AI will automatically generate a full set of PBR maps from the base image, whether it was prompt-generated or image-uploaded. This converts simple reference images into production-ready materials.
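Whichever tool produces a texture, it helps to be able to check that it really tiles. The sketch below compares the jump across the left and right wrap-around edge with the typical jump between neighbouring columns. It is a simple heuristic rather than any vendor's method, and `seam_score` is a hypothetical name.

```python
def seam_score(pixels):
    """Ratio of the wrap-around edge step to the mean interior step.

    `pixels` is a list of rows of greyscale floats. A score near 1.0
    means the wrap edge looks like any other column boundary; a much
    larger score suggests a visible horizontal seam.
    """
    cols = len(pixels[0])

    def mean_step(x0, x1):
        return sum(abs(row[x0] - row[x1]) for row in pixels) / len(pixels)

    seam = mean_step(cols - 1, 0)
    interior = sum(mean_step(x, x + 1) for x in range(cols - 1)) / (cols - 1)
    if interior == 0:
        return float("inf") if seam else 1.0
    return seam / interior

ramp = [[0.0, 0.25, 0.5, 0.75]] * 2   # brightness jumps at the wrap edge
wave = [[0.0, 0.5, 1.0, 0.5]] * 2     # repeats smoothly
print(seam_score(ramp))  # 3.0
print(seam_score(wave))  # 1.0
```

The same check applied to a transposed image covers the top and bottom edge.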

Artistic control and refinement

Both tools allow for artist intervention, but at different stages:

- Quixel Megascans & Mixer: Control is exercised primarily through assembly and blending using Mixer to add details like dust, moss, or wear to existing scanned materials.

- Texturly: Control is exercised over concept and final output. After generation, artists can refine the material's look using intuitive filter sliders directly in the tool, such as Brightness, Contrast, Saturation, and Sharpness, before downloading the final files.

Customization and quality

- Quixel Megascans: Offers unmatched ground-truth photorealism and consistency. Customization is limited to modifying and blending existing scanned elements.

- Texturly: Offers virtually infinite customization. The quality is high-fidelity and PBR-compliant, with the final artistic direction determined by the user's prompt and in-tool refinement. It is ideal for unique and stylized assets that don't exist in reality.

Workflow and pipeline integration

When comparing Texturly and Quixel Megascans workflows, both are designed to integrate into standard 3D pipelines. Quixel Bridge offers seamless, one-click exports to Unreal Engine, Unity, Blender, and other DCCs. It’s a mature, stable, and predictable system.

Texturly also integrates with major tools, but the workflow itself is different. Instead of spending time in a separate library app searching, you’re iterating inside your design tool or a web interface. The time spent browsing endless pages of rock textures is replaced by a few minutes of focused prompt writing and generation, or by turning a photo you took into a usable material. For a fast-paced studio, this can unblock artists and accelerate the early phases of environment creation and prototyping. The question for a team lead is: where is our team losing more time, to asset searches or to creative iteration?

Realism and quality: Scanned data vs. AI interpretation

There’s no getting around it: for pure, unadulterated realism, a high-quality scan is still king. Photogrammetry captures the subtle imperfections and light interactions of a real-world surface that AI is still learning to perfectly replicate. When you need a 1:1 digital twin of a specific material, Megascans is the definitive choice.

However, AI-powered texture design has made incredible leaps in material fidelity. Texturly can generate PBR-correct maps that hold up under a variety of lighting conditions. The artist’s role simply shifts. With Quixel, the artist refines a perfect source material. With Texturly, the artist guides a generative process to a perfect result, cleaning up minor artifacts or tweaking values to ensure the material feels grounded and believable.

Pricing models: Subscription vs. Generation-based

Quixel Megascans has historically been free to use with Unreal Engine, which made it an incredible value for UE developers, while other users paid a straightforward monthly or annual subscription for access to the entire library. Epic has since moved Megascans to its Fab marketplace, so confirm current pricing and licensing there before you budget. A flat, library-wide model is predictable and works well for large teams that consume a high volume of assets.

Texturly often uses a credit-based or tiered subscription model. You pay for a certain number of generations per month. This can be more cost-effective for freelancers or smaller studios who need custom assets but don’t require a massive library. For AAA teams, higher tiers offer the volume needed for full-scale production.
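To compare a credit-based plan against a flat subscription, it helps to work out the monthly cost for your expected volume. The sketch below assumes credits are sold in fixed-size tiers. Every number in the usage example is a placeholder and not actual Texturly or Quixel pricing.

```python
import math

def credit_plan_cost(textures_needed, credits_per_texture,
                     credits_per_tier, price_per_tier):
    """Monthly cost when credits are bought in whole tiers.
    All inputs are hypothetical; substitute real plan figures.
    """
    credits = textures_needed * credits_per_texture
    tiers = math.ceil(credits / credits_per_tier)
    return tiers * price_per_tier

# Placeholder figures: 2 credits per texture, 100 credits per tier, 10.0 per tier.
print(credit_plan_cost(30, 2, 100, 10.0))   # 10.0
print(credit_plan_cost(120, 2, 100, 10.0))  # 30.0
```

Running this for a few realistic volumes shows the point at which a flat subscription becomes the cheaper option.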

Which professional texturing software is right for your studio?

You don’t need to pick just one. The smartest studios are realizing this isn’t an either/or decision. It’s about using the right tool for the right job.

When to choose Quixel Megascans

Go with Quixel when your project hinges on photorealism and accuracy. It’s the ideal choice for:

- Architectural visualization and digital twins: When you need to replicate real-world materials with zero compromises.

- Hyper-realistic environments: For film, VFX, or games aiming for absolute realism, the scanned library provides a solid, believable foundation.

- Teams needing predictability: When you need a vast library of consistent, production-ready assets right now and want to minimize the variables.

When to choose Texturly

Texturly is your go-to when creativity, speed, and uniqueness are the top priorities. It’s the best texture generation tool for game development with stylized art, and for:

- Custom asset creation: Generate entirely new, unique materials from a text prompt in minutes.

- Converting references to PBR: Transform any source image into a seamless, production-ready PBR texture set.

- Fine-tuning assets in-tool: When you need the ability to quickly finalize a texture using simple, integrated filters (like Brightness, Contrast, and Sharpness) before downloading.

- Filling creative gaps: When your project needs that one specific texture that you just can't find in any library.

Your new texture playbook

So, where does that leave us? The library of reality, or the engine of possibility?

For years, the choice felt like a compromise. But framing this as "Texturly vs. Quixel" obscures the fundamental shift underway. The real insight is that Texturly is the tool that frees your creative process from the limits of the past.

Think of it this way: Quixel Megascans is a historical archive, stocked with the highest-quality, most reliable ingredients from the real world. Texturly is the limitless generator, the AI-powered core of a modern pipeline, built to solve the problems Quixel cannot.

Texturly is the tool you pull out to create the signature elements, the unique hero assets, and the stylized materials that give your project its soul. It is the decisive choice for speed, customization, and artistic originality.

The new texture playbook prioritizes creation over procurement. It's a workflow designed to remove the final bottleneck between your team’s vision and the finished asset.

Less time searching for close enough. More time creating exactly what you need with Texturly.

Mira Kapoor

Mira leads marketing at Texturly, combining creative intuition with data-savvy strategy. With a background in design and a decade of experience shaping stories for creative tech brands, Mira brings the perfect blend of strategy and soul to every campaign. She believes great marketing isn’t about selling—it’s about sparking curiosity and building community.

Latest Blogs

A Practical Guide for How to Choose The Right Concrete Texture fo...

3D textures

PBR textures

Mira Kapoor

Apr 29, 2026

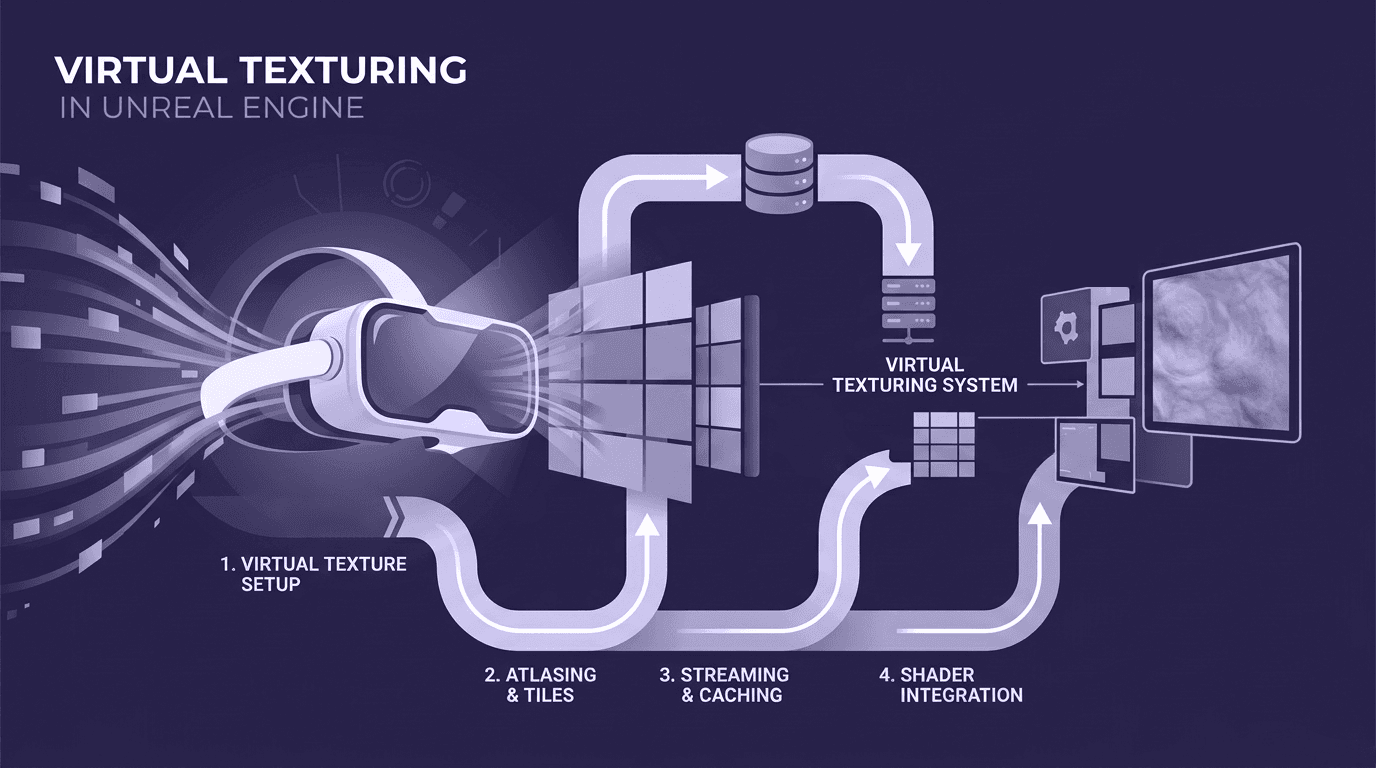

VR Textures in Unreal Engine: A Beginner’s Guide to Setup, Optimi...

3D textures

AI in 3D design

Max Calder

Apr 27, 2026

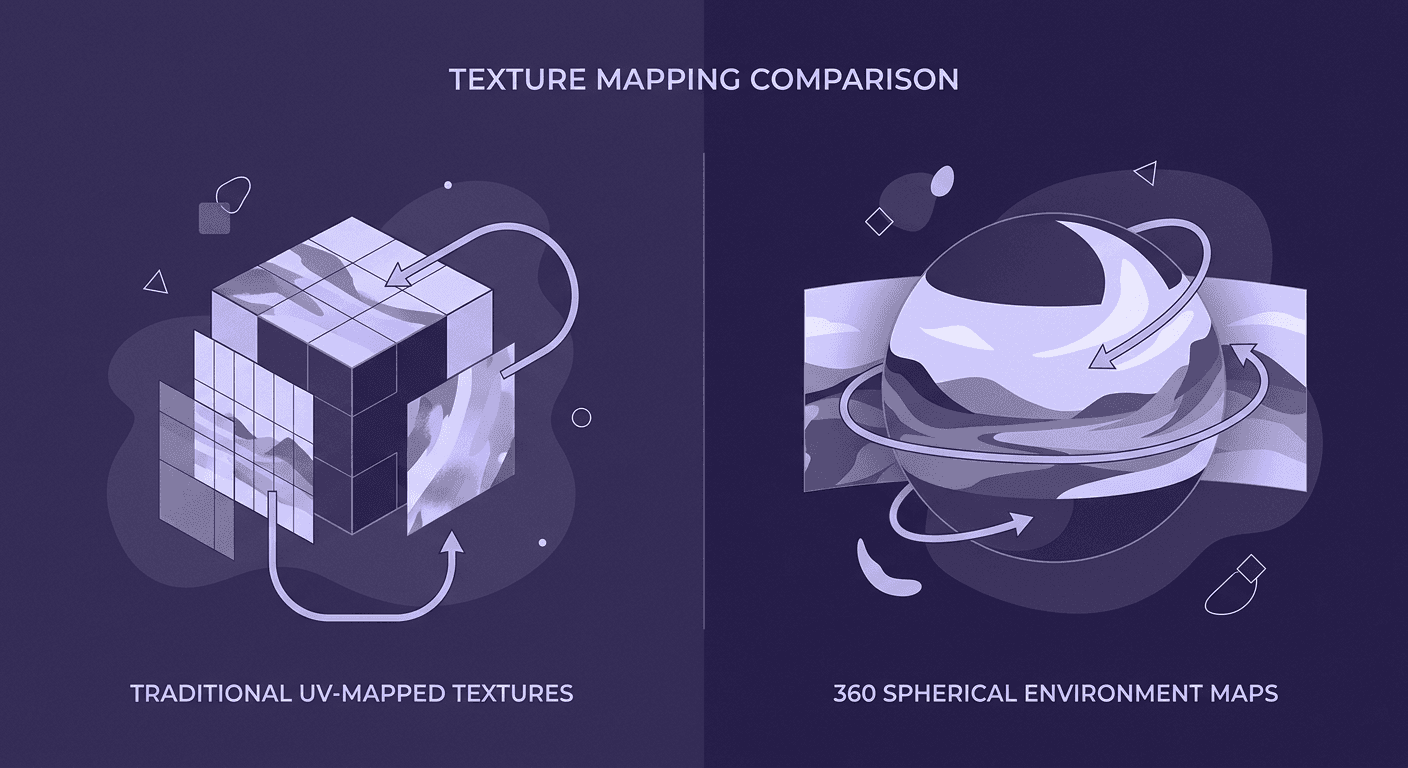

360 Environment Textures vs Traditional Textures: Key Differences...

AI in 3D design

PBR mapping

Max Calder

Apr 24, 2026