Your Skybox Is Wearing Thin: How 360 Textures Became the Engine

By Max Calder | 28 November 2025 | 14 mins read

For years, the skybox was the best trick we had, a simple texture that gave us epic sunsets and alien skies on a budget. But in the era of VR and fully dynamic worlds, that old trick is wearing thin. Players can feel it when a stunning vista doesn't move right, when the world feels flat and disconnected from its own sky. We’re going to unpack how 360 environment textures are evolving from simple backdrops into the very heart of next-gen rendering, powering everything from believable VR presence to dynamic global illumination and real-time ray tracing. This isn't just about making prettier skies; it's a fundamental shift in how we build worlds that feel cohesive, alive, and truly immersive.

Beyond the skybox: The new demands on 360 environment textures in gaming

The shift to living, reactive game worlds has raised the bar for what a background is allowed to be. Modern engines need skies that don’t just sit there; they must influence lighting, support dynamic atmospheres, and behave like real environments rather than painted scenery. Today’s 360 textures are expected to respond to gameplay, simulate depth, and integrate seamlessly with world systems, forcing a complete rethink of how artists build the visual foundation of a game.

Why traditional environment mapping falls short in next-gen games

The grand illusion is over. The humble skybox, a relic from an era of tightly constrained hardware, no longer meets the expectations of a modern player. It was a masterpiece of efficiency, allowing us to render vast, complex horizons with minimal computational cost. But what was once a clever shortcut is now an artistic and technical compromise. A dynamic world demands an equally dynamic atmosphere, and the fundamental limitations of the simple textured cube have become glaringly apparent in the age of high-fidelity graphics and immersive virtual reality.

Here’s where it breaks down:

- Static lighting: A static skybox means static ambient light. When your game’s sun sets, the sky might turn a gorgeous orange, but the light bouncing onto the environment doesn’t change with it. The illusion shatters. The world feels flat, disconnected, and fake because the background isn't an active participant in the scene's lighting.

- Zero parallax: In the real world, distant objects appear to move more slowly across your view than nearby ones as you move. This is parallax, a fundamental depth cue. A traditional skybox has none. Whether you’re running, jumping, or flying, those distant mountains stay perfectly still relative to each other. In a standard game, it's a minor flaw. In VR, it’s a deal-breaker that can make players feel physically sick, because their brains expect depth that isn’t there.

- Interaction is impossible: You can't fly into a skybox. You can’t have volumetric clouds drift in front of a skybox mountain. It’s a wall, and the closer players get to the edge of your world, the more obvious that wall becomes. Open-world games demand seamlessness, and a simple textured cube is the opposite of seamless.

Next-gen immersive game environment design isn't just about higher-poly models; it's about building worlds that feel cohesive and alive. That requires environments that are more than just backdrops. They need to be integrated light sources, have real depth, and react to the player and the world itself. This is the new baseline.

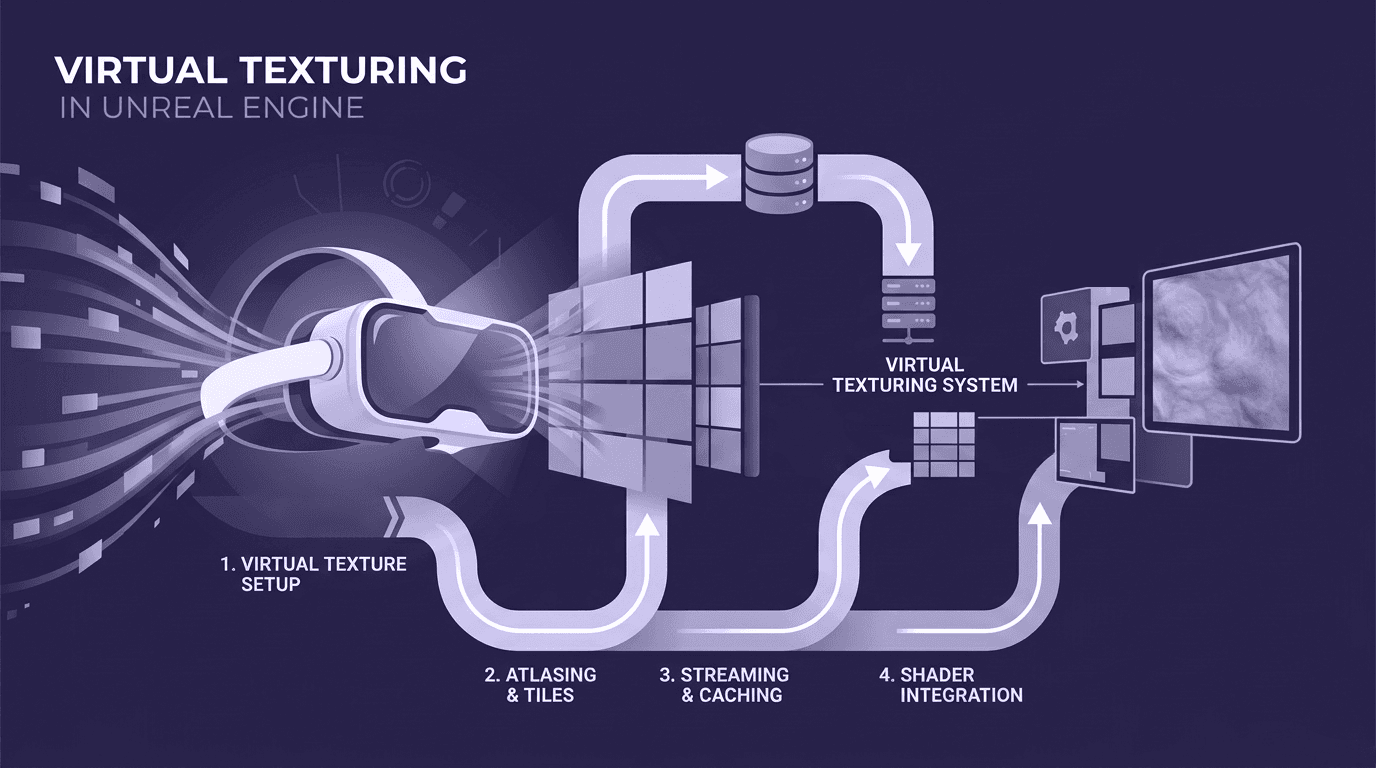

Unpacking the computational challenges of high-resolution 360 textures

So, we need bigger, better, more dynamic 360 environment textures. Easy, right? Just use a 16K HDRi and call it a day. If only.

The computational cost is where the real battle is fought. A single, uncompressed 16K HDR texture can eat up around a gigabyte of VRAM: a 16,384 × 8,192 equirectangular image at 8 bytes per texel (16-bit float RGBA) comes to 1 GiB before mipmaps. That’s a massive chunk of a console or PC’s memory budget, gone before you’ve even loaded a character model. This isn’t just a storage issue; it’s a performance bottleneck.

- Memory bandwidth: Your GPU is constantly fetching texture data from VRAM. The larger the texture, the more bandwidth it needs. When multiple high-resolution textures are needed for reflections, global illumination, and the visible sky, you can saturate the memory bus. The result? Stuttering, frame drops, and a frustrated player.

- Performance trade-offs: Every gigabyte spent on a sky texture is a gigabyte you can't use for character armor, detailed props, or high-res shadow maps. The art of game development is a balancing act, and blowing your budget on one asset is a recipe for failure.
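
To put numbers on that budget, here is a back-of-the-envelope calculation. It assumes "16K" means a 2:1 equirectangular image of 16,384 × 8,192 texels and looks at two common uncompressed float formats; the function name and the format table are illustrative, not taken from any particular engine.

```python
# Rough VRAM footprint of an uncompressed equirectangular HDR texture.
# Assumption: "16K" means a 2:1 equirectangular image, 16384 x 8192 texels.

BYTES_PER_TEXEL = {
    "RGBA16F": 8,   # four 16-bit float channels
    "RGBA32F": 16,  # four 32-bit float channels
}

GIB = 1024 ** 3


def texture_bytes(width: int, height: int, fmt: str) -> int:
    """Size of the top mip level only, in bytes."""
    return width * height * BYTES_PER_TEXEL[fmt]


for fmt in BYTES_PER_TEXEL:
    size = texture_bytes(16384, 8192, fmt)
    print(f"16K {fmt}: {size / GIB:.2f} GiB")
```

Block-compressed HDR formats shrink these figures considerably, which is one reason shipping games rarely keep a sky of this size uncompressed.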

This is where smart optimization comes in, the stuff that separates amateur work from professional pipelines.

- Mipmapping is non-negotiable. This is the practice of generating a chain of down-sampled, pre-filtered versions of a texture. When the texture covers only a few pixels on screen, the GPU samples a smaller mip level; when it fills the view, it samples a higher-resolution one. The full chain costs roughly a third more memory, but it drastically reduces the required memory bandwidth and prevents aliasing artifacts (shimmering on distant textures). It’s been a staple for years, and with massive 360 textures, it’s a performance lifesaver.

- Streaming is the future. Think of it like Netflix for textures. Instead of loading the entire 1GB texture into VRAM at once, the engine only streams in the parts of the texture (and the required mip level) that are currently visible to the player. This is fundamental to how massive open-world games work. It requires a fast SSD and a sophisticated engine, but it allows for virtually limitless detail without paying the full memory cost upfront.
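
Both ideas can be sketched in a few lines. The example below builds the mip chain for a 16,384 × 8,192 texture and compares the cost of keeping the whole chain resident with the cost of keeping only the smaller levels, which is roughly what a streaming system does for a sky nobody is inspecting closely. The 8 bytes per texel and the helper names are assumptions made for illustration.

```python
def mip_chain(width, height):
    """Return [(w, h), ...] from the full-resolution level down to 1x1."""
    levels = [(width, height)]
    while width > 1 or height > 1:
        width = max(1, width // 2)
        height = max(1, height // 2)
        levels.append((width, height))
    return levels


def chain_bytes(levels, bytes_per_texel, first_level=0):
    """Bytes needed when only levels[first_level:] are resident in memory."""
    return sum(w * h * bytes_per_texel for w, h in levels[first_level:])


BYTES_PER_TEXEL = 8  # assumption: 16-bit float RGBA
MIB = 1024 ** 2

levels = mip_chain(16384, 8192)
top_only = 16384 * 8192 * BYTES_PER_TEXEL
full_chain = chain_bytes(levels, BYTES_PER_TEXEL)
streamed = chain_bytes(levels, BYTES_PER_TEXEL, first_level=2)  # 4096x2048 and below

print(f"mip levels:          {len(levels)}")
print(f"full chain overhead: {full_chain / top_only:.3f}x the top level")
print(f"resident from mip 2: {streamed / MIB:.1f} MiB")
```

Dropping just the two largest levels cuts the resident size by more than an order of magnitude, which is why streaming systems fetch the top levels only when the view actually demands them.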

Getting this right is the key. You want the visual impact of a high-fidelity world without the performance cost, and that means treating your environment textures not as simple assets, but as a data stream to be managed intelligently. From here, we can start layering on more advanced, dynamic techniques.

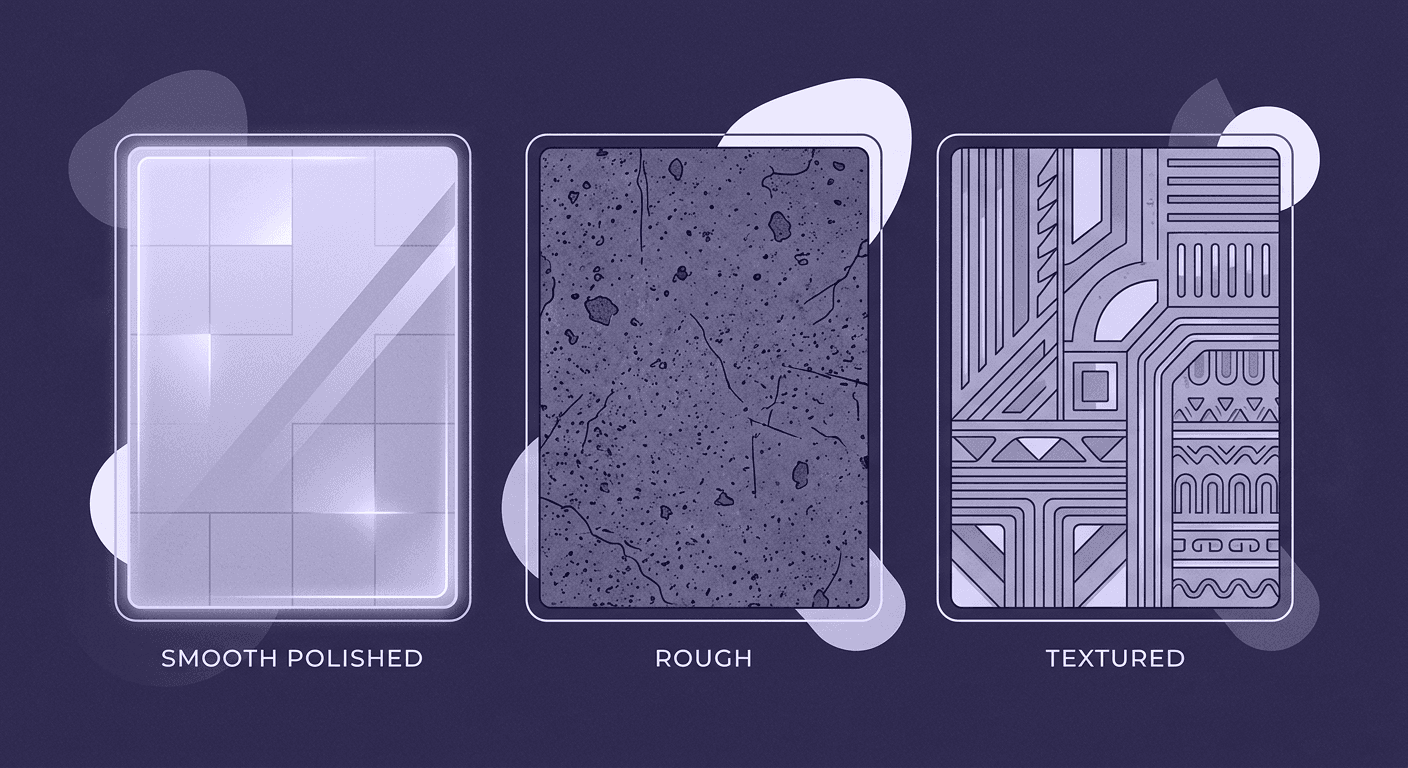

Advanced texture mapping techniques for creating immersive game worlds

As visual expectations climb, studios can no longer rely on single-pass environment maps or static lighting tricks. Advanced mapping techniques now form the backbone of believable worlds, systems that blend, project, and adapt textures in real-time. These methods allow developers to stitch together lighting, reflections, and atmospheric cues in a way that gives every surface a place within the larger environment, anchoring the world with clarity and coherence that players can feel instantly.

Dynamic integration with in-game lighting and weather systems

This is where 360 environment textures stop being just a background and become the heart of your lighting pipeline. Modern renderers treat your sky as the primary light source for the entire scene. We're talking about more than just a simple ambient colour; this is full-blown Image-Based Lighting (IBL).

Here’s how it works in practice: The 360-degree HDR texture provides the colour, intensity, and direction of light for the whole world. If the sky has a soft blue hue on one side and a warm, setting sun on the other, objects in the scene will be lit accordingly. Surfaces facing the blue sky will pick up cool reflections, while surfaces facing the sun will be bathed in warm light.
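
The lookup at the core of this is simple: turn a direction into a pixel coordinate on the 360 image. The sketch below assumes an equirectangular layout with +Y up and +Z at the centre of the image, which is only one of several conventions in use, and it uses a tiny hand-written 4 × 2 "environment map" with made-up colours in place of a real HDR file.

```python
import math


def direction_to_uv(x, y, z):
    """Map a direction to equirectangular (u, v) in [0, 1].

    Assumed convention: +Y is up, +Z maps to the image centre, v = 0 is the top.
    """
    length = math.sqrt(x * x + y * y + z * z)
    x, y, z = x / length, y / length, z / length
    y = max(-1.0, min(1.0, y))  # guard asin against rounding error
    u = math.atan2(x, z) / (2.0 * math.pi) + 0.5
    v = 0.5 - math.asin(y) / math.pi
    return u, v


def sample_nearest(env, u, v):
    """Nearest-texel lookup into a row-major grid of RGB tuples."""
    height, width = len(env), len(env[0])
    col = min(int(u * width), width - 1)
    row = min(int(v * height), height - 1)
    return env[row][col]


COOL = (0.4, 0.6, 1.0)   # placeholder blue sky
WARM = (1.0, 0.6, 0.3)   # placeholder setting sun
DARK = (0.1, 0.1, 0.1)   # placeholder ground

ENV = [
    [COOL, COOL, COOL, WARM],   # upper hemisphere: sun sits toward +X
    [DARK, DARK, DARK, DARK],   # lower hemisphere
]

print(sample_nearest(ENV, *direction_to_uv(1.0, 0.5, 0.0)))   # facing the sun
print(sample_nearest(ENV, *direction_to_uv(-1.0, 0.5, 0.0)))  # facing away
```

A real renderer would filter across texels and pre-integrate the map for diffuse and glossy lighting, but the direction-to-texel mapping is the same idea.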

But the real magic happens when it becomes dynamic. A static HDRi is good, but a system that blends multiple HDRi maps based on time of day or weather is what creates a truly living world.

Case Study: A dynamic time-of-day cycle

Imagine a game like Ghost of Tsushima. The world transitions from dawn to noon to sunset to a moonlit night. This isn't just a simple colour filter. A system like this is likely blending between multiple 360 environment maps:

- Dawn: An HDRi with a low, intense sun and cool, purple ambient light.

- Midday: A bright, overhead sun with sharp shadows and strong blue skylight.

- Sunset: A low, orange sun casting long shadows and warm, diffuse light.

- Night: A faint moon and starfield providing subtle, cool illumination.

The engine smoothly interpolates between these textures over time. As it does, the global illumination, reflections, and ambient light of the entire world change realistically. This is then combined with local light sources (lamps, fires) and reflection probes, which capture a localized version of the environment to provide more accurate, grounded reflections on nearby objects. It’s this multi-layered approach that sells the illusion of a cohesive, persistent world.
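
As a rough illustration of that interpolation, the sketch below picks the two keyframes surrounding the current hour and blends between them, wrapping past midnight. To stay short, each keyframe carries a single placeholder ambient colour rather than a full HDR map; in a real pipeline the same blend factor would be applied to the environment textures themselves. The key times and colours are invented for the example.

```python
# Keyframes: (hour, name, placeholder ambient RGB). All values are illustrative.
KEYS = [
    (0.0, "night", (0.05, 0.07, 0.15)),
    (6.0, "dawn", (0.55, 0.45, 0.70)),
    (12.0, "midday", (0.60, 0.75, 1.00)),
    (18.0, "sunset", (1.00, 0.55, 0.30)),
]


def blend_for_hour(hour):
    """Return (from_name, to_name, t) where t in [0, 1) is the blend factor."""
    hour %= 24.0
    count = len(KEYS)
    for i in range(count):
        t0, name0, _ = KEYS[i]
        t1, name1, _ = KEYS[(i + 1) % count]
        span = (t1 - t0) % 24.0       # last segment wraps past midnight
        offset = (hour - t0) % 24.0
        if offset < span:
            return name0, name1, offset / span
    raise ValueError("keyframes do not cover the full day")


def ambient_at(hour):
    """Linearly interpolate the ambient colour for the given hour."""
    name0, name1, t = blend_for_hour(hour)
    colours = {name: colour for _, name, colour in KEYS}
    a, b = colours[name0], colours[name1]
    return tuple(x + (y - x) * t for x, y in zip(a, b))


for hour in (6.0, 9.0, 21.0):
    print(hour, blend_for_hour(hour), ambient_at(hour))
```

Linear blending is the simplest choice; a production system might ease the transitions or hold certain keyframes longer, but the structure stays the same.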

Procedural environment generation and texturing

Okay, but who has the time to create dozens of unique, high-resolution 360-degree textures by hand? For the vast, varied worlds players now expect, manual creation is a bottleneck. This is where proceduralism comes in, not to replace artists, but to amplify their work.

Procedural environment generation uses algorithms to create content based on a set of rules defined by an artist. Instead of painting a sky, an artist might define:

- The type of clouds (cumulus, cirrus, etc.)

- The cloud coverage and density.

- Atmospheric haze and humidity.

- The position and colour of the sun.

From these parameters, tools like Houdini or even in-engine systems can generate a physically plausible and fully dynamic 360-degree sky. This isn't a static texture; it's a simulation. The clouds can move, form, and dissipate. The sun can travel across the sky, and the atmospheric scattering is recomputed for each moment of the day.

This workflow gives artists incredible leverage. They can create infinite variations of a sky that all adhere to a consistent art direction. Need a stormy sky for a specific quest? Tweak the cloud density and darkness parameters. Need a clear desert sky? Reduce the humidity and cloud coverage. It transforms the artist's role from a painter of pixels to a director of atmospheres. This is how you build a world that feels both immense and art-directed, a core challenge in modern game graphics innovation.
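
One way to picture this "director of atmospheres" workflow is as a small set of validated parameters with presets derived from a shared base. The sketch below is hypothetical: the `SkyParams` fields mirror the artist-facing controls listed above, but the names, ranges, and default values are assumptions, not the interface of Houdini or any engine.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SkyParams:
    """Hypothetical artist-facing controls for a procedural sky."""
    cloud_type: str = "cumulus"
    cloud_coverage: float = 0.4      # 0 = clear, 1 = fully overcast
    cloud_density: float = 0.5       # 0 = wispy, 1 = thick and dark
    humidity: float = 0.5            # drives atmospheric haze
    sun_elevation_deg: float = 45.0
    sun_colour: tuple = (1.0, 0.96, 0.9)

    def __post_init__(self):
        # Keep the normalised controls inside their documented range.
        for name in ("cloud_coverage", "cloud_density", "humidity"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0, 1], got {value}")


BASE = SkyParams()

# A stormy sky for a specific quest: more, denser, darker cloud.
STORM = replace(BASE, cloud_type="cumulonimbus",
                cloud_coverage=0.95, cloud_density=0.9)

# A clear desert sky: reduce humidity and cloud coverage.
DESERT = replace(BASE, cloud_coverage=0.05, humidity=0.1)

print(STORM)
print(DESERT)
```

Because every preset starts from the same base, changes to the shared art direction (say, a warmer sun colour) propagate to all variations automatically.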

Now, let's push this even further and see how these textures are fundamentally changing the player experience in VR and AR.

The VR/AR frontier: How 360 environment textures transform game design

VR and AR push environment textures into a new role entirely: guardians of presence. Here, the sky isn’t a backdrop; it’s a critical piece of sensory alignment that determines whether the world feels stable or unsettling. Delivering the illusion convincingly requires textures that support depth cues, precise lighting, and spatial coherence tailored to motion and head-tracking. In immersive platforms, 360 textures become an essential part of how the player perceives reality itself.

Designing for presence: The unique demands of virtual reality texturing

In traditional gaming, a visual flaw might break immersion. In VR, it can break the player. The feeling of presence, the brain's acceptance of the virtual world as real, is incredibly fragile. And 360 environment textures are on the front line of maintaining that illusion.

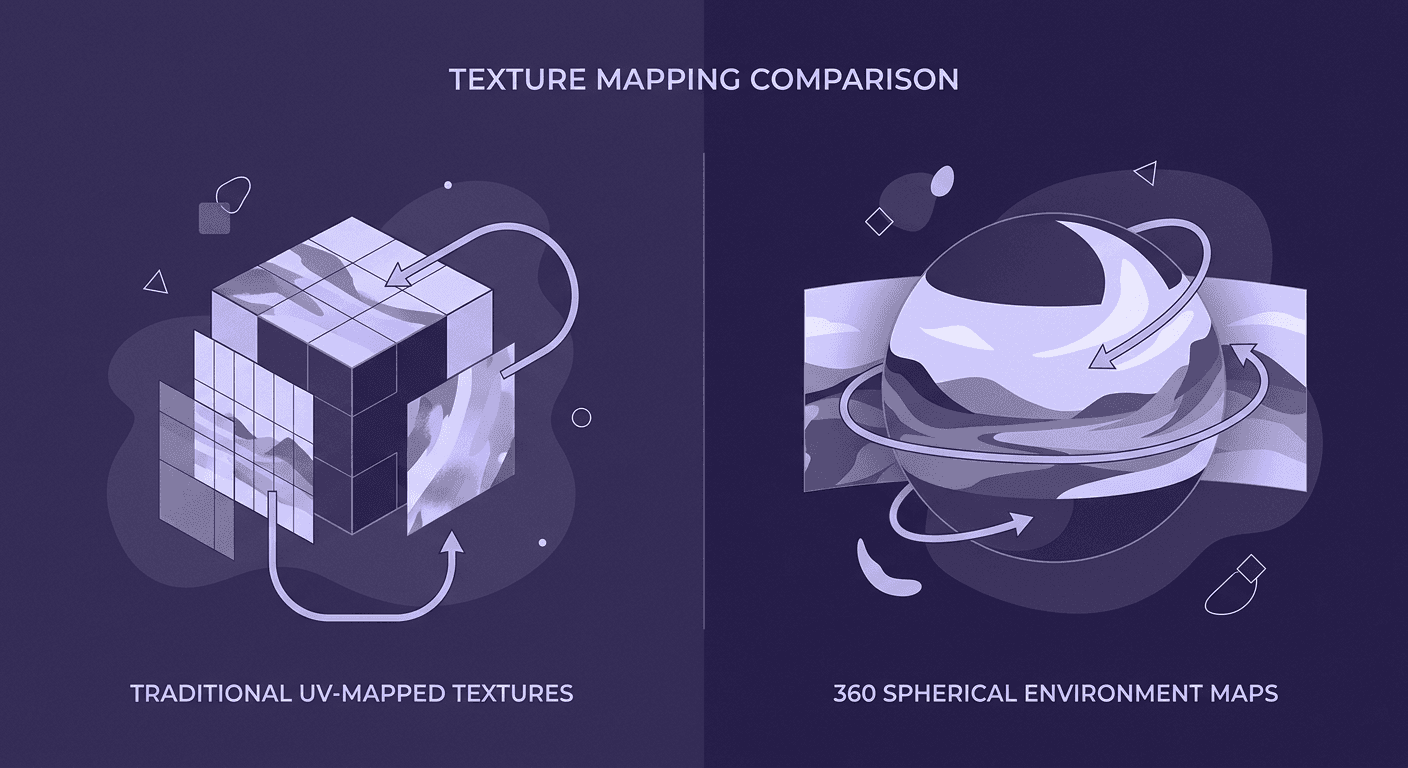

Here’s how 360 environment textures differ in VR versus traditional gaming: it's all about keeping what the eyes see in agreement with what the vestibular system feels. Your brain uses subtle visual cues, like parallax and stereoscopic depth, to understand your place in the world. When those cues disagree with your sense of balance and motion, you get motion sickness.

- Stereoscopy magnifies flaws: In VR, two images are rendered, one for each eye. This stereoscopic view gives objects real depth, but it also ruthlessly exposes flat backgrounds. A simple 360-degree image projected onto a sphere looks immediately wrong because it lacks depth. When the player moves their head, the background doesn't shift correctly, creating a sensory mismatch.

- Parallax is paramount: To fix this, developers are turning to more advanced techniques. Instead of a single texture, they might use multi-layered environments or stereo cubemaps, which contain separate textures for the left and right eye. A more advanced method involves generating depth information for the 360-degree texture, allowing the engine to re-project the environment with a convincing parallax effect as the player moves their head slightly. This small movement is the difference between a flat, painted backdrop and a world that feels like it has real distance and scale.
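
A little trigonometry shows why depth information matters here. For a sideways head movement, an object straight ahead shifts across the view by an angle of atan(offset / distance). The sketch below evaluates that for a 10 cm head movement at several distances; the distances are arbitrary examples, and the function name is just for illustration.

```python
import math


def parallax_shift_deg(head_offset_m, distance_m):
    """Apparent angular shift of a point straight ahead after a sideways move."""
    return math.degrees(math.atan2(head_offset_m, distance_m))


HEAD_OFFSET_M = 0.10  # a small lean to one side

for distance in (1.0, 10.0, 100.0, 1000.0):
    shift = parallax_shift_deg(HEAD_OFFSET_M, distance)
    print(f"{distance:7.1f} m -> {shift:.4f} degrees")
```

Nearby layers shift by several degrees while kilometre-scale scenery barely moves at all. A single flat 360 image gives every texel the same shift, so anything in it that is supposed to be close, such as nearby trees or rooftops, is what gives the trick away. Per-texel depth lets the engine apply the right shift to each part of the image.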

For a VR artist, getting this right is non-negotiable. The goal of virtual reality texturing isn't just to look pretty; it's to create spaces that are believable, comfortable, and stable enough for players to inhabit.

Integrating 360 assets in augmented reality experiences

If VR is about replacing the real world, Augmented Reality (AR) is about enhancing it. Here, the challenge is reversed: how do you make a virtual object look like it truly exists in the player's real-world room? The answer, once again, lies in 360 environment textures.

Many high-end AR applications build a 360-degree picture of the user's surroundings, typically assembled from the camera feed. This isn't shown to the user; it's used as a light source.

- Real-world lighting matching: This 360-degree capture becomes an HDRi that lights the virtual objects. If you're in a room with a bright window on your left and a warm lamp on your right, a virtual car placed on your coffee table will be lit correctly, with a cool highlight on its left side and a warm glow on its right. Its reflections will accurately show the window and the lamp.

- Grounding through reflections: Without this, the virtual object would feel like a sticker pasted onto the screen. With accurate, real-world lighting and reflections, the brain starts to believe the object is physically there. The subtle reflection of your couch on a virtual chrome sphere is an incredibly powerful cue.
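
The window-and-lamp example can be reduced to a toy calculation. The sketch below treats the captured environment as just three directional samples with made-up colours and sums their contribution to a surface using a clamped cosine term. This is a deliberately crude stand-in: a real pipeline integrates over the whole captured map and handles reflections separately.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# (direction toward the light, RGB radiance). Placeholder values for illustration.
ENV_SAMPLES = [
    ((-1.0, 0.0, 0.0), (0.6, 0.8, 1.0)),  # bright window on the left: cool light
    ((1.0, 0.0, 0.0), (1.0, 0.6, 0.3)),   # lamp on the right: warm light
    ((0.0, 1.0, 0.0), (0.2, 0.2, 0.2)),   # dim ceiling bounce
]


def diffuse_light(normal):
    """Sum each sample's radiance weighted by how directly the surface faces it."""
    total = [0.0, 0.0, 0.0]
    for direction, radiance in ENV_SAMPLES:
        weight = max(0.0, dot(normal, direction))  # surfaces facing away get nothing
        for i in range(3):
            total[i] += radiance[i] * weight
    return tuple(total)


left_side = diffuse_light((-1.0, 0.0, 0.0))
right_side = diffuse_light((1.0, 0.0, 0.0))
roof = diffuse_light((0.0, 1.0, 0.0))

print("left side :", left_side)
print("right side:", right_side)
print("roof      :", roof)
```

The left side of the virtual car comes out blue-tinted and the right side warm, matching the room, which is the effect described above.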

The challenge is doing this in real-time, on a mobile device, without draining the battery. It requires highly efficient image capture, processing, and rendering pipelines. But as this technology improves, the line between the real and the virtual will only continue to blur, driven by the clever application of 360-degree textures.

The future of texture rendering in video games

As real-time rendering evolves, the role of 360 textures expands from visual assets to active components of global lighting and simulation. New workflows powered by ray tracing, AI generation, and volumetric systems are redefining what environment rendering even means. These innovations point toward worlds where the sky, atmosphere, and lighting react fluidly to design choices, giving creators unprecedented flexibility and players a deeper sense of immersion than ever before.

The impact of real-time ray tracing technologies

For decades, game graphics have been a masterclass in faking it. Reflections were done with screen-space techniques or baked cubemaps. Global illumination was pre-calculated and stored in lightmaps. Real-time ray tracing is changing all of that, shifting graphics from approximation to simulation.

And at the centre of this shift are 360 environment textures. Here's how they interact:

- Truly dynamic reflections: With ray tracing, a reflective surface like water or metal no longer has to rely on a pre-baked reflection probe. Instead, the engine casts rays from the surface into the scene. When those rays miss all geometry and reach the sky, they sample the 360 environment texture directly. This means the reflections track the sky in real time. If clouds move across the sky in your dynamic texture, you will see that movement reflected in every puddle, window, and piece of chrome in your world. There's far less faking involved.

- Accurate global illumination (GI): Ray tracing allows for true GI, where light bounces around a scene naturally. The 360 environment texture acts as the primary source for this bounced light. Rays are cast out from surfaces in the scene to see what light they're receiving from the sky. A patch of ground under a bright blue sky will be lit differently than one under a thick, grey cloud cover. This can remove the need for baked lighting, allowing for fully dynamic environments where lighting and geometry can change at any time without requiring a lengthy pre-computation process.
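
The reflection case boils down to one formula: mirror the incoming view direction about the surface normal, r = d - 2(d·n)n, then look up the sky in that direction. The sketch below assumes the reflected ray hits no geometry and uses a small analytic `sky_radiance` function with invented colours as a stand-in for a real 360 texture lookup.

```python
import math


def reflect(d, n):
    """Mirror incoming direction d about unit normal n: r = d - 2(d.n)n."""
    dn = sum(a * b for a, b in zip(d, n))
    return tuple(a - 2.0 * dn * b for a, b in zip(d, n))


def sky_radiance(direction, cloud_cover):
    """Stand-in for a 360 texture lookup: blue when clear, grey when overcast."""
    up = max(0.0, direction[1])
    clear = (0.3 + 0.2 * up, 0.5 + 0.2 * up, 1.0)
    grey = (0.6, 0.6, 0.6)
    return tuple(c + (g - c) * cloud_cover for c, g in zip(clear, grey))


def trace_reflection(view_dir, normal, cloud_cover):
    """Assumes the reflected ray misses all geometry and samples the sky."""
    return sky_radiance(reflect(view_dir, normal), cloud_cover)


s = math.sqrt(0.5)
puddle_normal = (0.0, 1.0, 0.0)
looking_down = (0.0, -s, s)  # camera looking down at 45 degrees

clear_day = trace_reflection(looking_down, puddle_normal, cloud_cover=0.0)
overcast = trace_reflection(looking_down, puddle_normal, cloud_cover=1.0)

print("clear   :", clear_day)
print("overcast:", overcast)
```

Nothing about the puddle changed between the two calls; only the sky did. That is the practical meaning of the sky becoming an active part of the lighting simulation.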

This is a fundamental change in how 360 environment textures transform game design. They are no longer just a source for ambient light and a backdrop; they are a critical, active component of a real-time light simulation. The fidelity of your sky now directly impacts the fidelity of your entire scene's lighting.

Game graphics innovation and what’s next for environment design

The pace of change isn't slowing down. As we look ahead, a few key technologies are poised to redefine how we create and use 360 environments.

- AI-powered texture generation: The bottleneck of asset creation is a constant pain point. AI tools are starting to provide a solution. Imagine typing a prompt like "a dramatic, stormy sky at sunset over a cyberpunk city" and getting a high-quality, seamless 360-degree HDRi in seconds. Generative models can also be used for enhancement, upscaling low-resolution textures or outpainting to extend existing environments. This won't replace artists, but it will become an incredibly powerful tool in their arsenal for rapid prototyping and ideation.

- The rise of volumetrics: The next frontier is moving beyond a texture on a distant sphere and into true volumetric environments. Think volumetric clouds that have real depth, that you can fly through, that cast realistic, soft shadows on the ground below. Think dynamic fog and atmospheric haze that are part of the scene's physics, not just a post-process effect. These systems will still be driven and coloured by 360-degree textures, but they add a layer of physical depth and interaction that a flat texture alone cannot provide. This is the future of texture rendering in video games, a seamless blend of textured, procedural, and simulated elements to create worlds that are not just believable, but truly alive.

The sky isn’t the limit, it’s the engine

For years, we treated the sky like a beautiful but static painting on the wall of our world. What we’ve really unpacked here is a fundamental shift in thinking: the 360 environment is no longer just wallpaper. It’s the engine.

It’s the heart of your lighting, the source of your reflections, and the anchor for player presence, especially in VR. The tools we’ve covered, from procedural generation to real-time ray tracing, aren’t just about chasing photorealism. They’re about giving you, the creator, more direct and dynamic control over the atmosphere and emotion of the worlds you build.

The next great leap in immersive design won’t just be about the characters and objects in the scene. It will be defined by the world that surrounds them, powered by a sky that feels just as alive and interactive as everything else. The sky is no longer the limit; it’s the new starting point.

Max Calder

Max Calder is a creative technologist at Texturly. He specializes in material workflows, lighting, and rendering, but what drives him is enhancing creative workflows using technology. Whether he's writing about shader logic or exploring the art behind great textures, Max brings a thoughtful, hands-on perspective shaped by years in the industry. His favorite kind of learning? Collaborative, curious, and always rooted in real-world projects.
