From Camera Frame to Pro AR Asset: The Complete ARKit Texture Pipeline

By Max Calder | 26 January 2026 | 13 mins read

You build a great 3D mesh, but when you project the camera texture onto it, something feels… off. The lighting is baked in, the details are blurry, and the whole thing looks less like seamless augmented reality and more like a sticker slapped on the world. This guide is about closing that gap. We're moving beyond the basics to unpack the professional workflow for capturing, mapping, and optimizing high-quality textures that make your AR assets look like they truly belong in the scene, and get your portfolio noticed. From leveraging LiDAR for a perfect canvas to writing custom shaders that react to virtual light, these are the practical, gig-winning skills that separate a junior artist from a seasoned pro.

Capture high-quality source data for your mesh

Everything downstream depends on what you capture upstream. No amount of clever mapping or shader work can rescue a texture that starts with poor source data. This stage is about treating the camera not as a screenshot tool, but as a precision input device. The goal is to capture frames that are sharp, well-lit, and spatially reliable, because the cleaner your source, the more convincing your final AR material will be.

Nail the basics: Accessing the camera frame in ARKit

You need to grab a high-quality snapshot from the live video feed. In ARKit, every ARFrame has a capturedImage property, which gives you the CVPixelBuffer of what the camera sees. Tapping into this is straightforward, but the trick is when to do it.

Most artists make this mistake: they grab a frame without thinking. But the real world is messy, full of motion blur, shifting light, and autofocus adjustments. You’re not just taking a picture; you’re capturing a texture source.

Here’s the pro tip: don’t just grab the latest frame. Wait for the right moment. Before you capture, check the camera’s tracking state (ARCamera.TrackingState). If it isn’t .normal, ARKit is still working out where the device is, and the camera pose you rely on for projection later may be unreliable. Just as important, you need a moment of stillness. If the user is waving their phone around, you’ll get a blurry mess. Capture when the device is relatively stable to avoid motion blur; this single choice dramatically improves texture clarity.
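One way to turn "wait for stillness" into code is a small gate that compares consecutive camera positions. This is a minimal sketch, not ARKit API: CameraSample and CaptureGate are hypothetical names, the 0.05 m/s threshold is an assumed starting value to tune, and rotation is ignored for brevity.

```swift
/// One camera reading. Hypothetical type for this sketch: with ARKit you would
/// fill it from frame.camera.transform.columns.3, frame.timestamp, and
/// `if case .normal = frame.camera.trackingState`.
struct CameraSample {
    var position: SIMD3<Double>   // metres, world space
    var timestamp: Double         // seconds
    var trackingIsNormal: Bool
}

/// Decides whether the newest frame is steady enough to use as a texture source.
struct CaptureGate {
    /// Fastest camera movement (m/s) still treated as "stable". Assumed value.
    let maxSpeed: Double
    private var previous: CameraSample?

    init(maxSpeed: Double = 0.05) { self.maxSpeed = maxSpeed }

    mutating func shouldCapture(_ sample: CameraSample) -> Bool {
        defer { previous = sample }   // always remember the latest reading
        guard sample.trackingIsNormal, let last = previous else { return false }
        let elapsed = sample.timestamp - last.timestamp
        guard elapsed > 0 else { return false }
        let delta = sample.position - last.position
        let speed = (delta * delta).sum().squareRoot() / elapsed
        return speed <= maxSpeed
    }
}
```

Feed it one sample per frame from your session delegate and only copy capturedImage when it returns true. A stricter gate could also require several steady frames in a row.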

Level up with LiDAR: How LiDAR sensors enhance texture generation

The camera gives you color, but LiDAR gives you context. For iPhones and iPads with a LiDAR scanner, you get a powerful tool for understanding the geometry of the world. How does this help with texturing? It’s all about accuracy.

When you project a 2D image onto a 3D mesh without depth information, it’s like using a slide projector. The image drapes over everything, causing textures on complex surfaces to look stretched and distorted. You’ve seen it before: a texture that looks fine on a flat wall but turns into a warped mess when it hits the corner of a table.

LiDAR helps solve this by providing precise depth data for every point in the mesh. This allows ARKit’s scene reconstruction to create a much more accurate 3D model in the first place. A better model means better UV coordinates and a texture that maps more cleanly. Think of it like this: the camera provides the paint, but LiDAR builds a better canvas. The result is a texture that feels shrink-wrapped to the surface, not just loosely projected onto it.
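Turning on scene reconstruction is a configuration step. The sketch below assumes an iOS target and a session you already own; makeScanningConfiguration is a hypothetical helper name, and the sceneDepth frame semantic is optional extra depth data rather than something the mesh requires.

```swift
#if os(iOS)
import ARKit

/// Builds a world-tracking configuration that enables LiDAR-backed
/// scene reconstruction when the device supports it.
func makeScanningConfiguration() -> ARWorldTrackingConfiguration {
    let configuration = ARWorldTrackingConfiguration()

    // Only LiDAR-equipped devices report support for mesh reconstruction.
    if ARWorldTrackingConfiguration.supportsSceneReconstruction(.mesh) {
        configuration.sceneReconstruction = .mesh
    }

    // Optional: per-frame depth maps alongside the reconstructed mesh.
    if ARWorldTrackingConfiguration.supportsFrameSemantics(.sceneDepth) {
        configuration.frameSemantics.insert(.sceneDepth)
    }
    return configuration
}
#endif
```

Run it with session.run(makeScanningConfiguration()). The reconstructed geometry then arrives as ARMeshAnchor objects through the session delegate, each carrying an ARMeshGeometry.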

Pre-process your captured frames for better results

Once you’ve captured the perfect frame, don’t just slap it onto your mesh. A little pre-processing goes a long way and is a hallmark of professional work. This is where you can correct for the imperfections of the real-world environment.

Using Apple’s Core Image framework, you can perform simple but powerful adjustments on the CVPixelBuffer before you use it for texturing. Is the lighting in the room a bit dim? Tweak the exposure. Are the colors washed out? Increase the saturation. A subtle sharpening filter can also help bring out surface details.

This step doesn’t need to be complicated. A simple pipeline of color and light correction ensures your texture is vibrant and clear. It’s the digital equivalent of cleaning a surface before you paint it, a quick, essential step for a polished finish.
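A correction pass like this can be a short Core Image filter chain. Treat the following as a sketch under assumptions: FrameCorrection and correctedImage are hypothetical names, and the default amounts and clamp ranges are illustrative guesses meant to keep the adjustment subtle, not recommended values.

```swift
#if canImport(CoreImage)
import CoreImage
#endif

/// Correction amounts, clamped so a bad input cannot wreck the texture.
struct FrameCorrection {
    let exposureEV: Double   // stops; clamped to -1...1
    let saturation: Double   // 1.0 means unchanged; clamped to 0.5...1.5
    let sharpness: Double    // clamped to 0...1

    init(exposureEV: Double = 0.3, saturation: Double = 1.1, sharpness: Double = 0.4) {
        self.exposureEV = min(max(exposureEV, -1), 1)
        self.saturation = min(max(saturation, 0.5), 1.5)
        self.sharpness = min(max(sharpness, 0), 1)
    }
}

#if canImport(CoreImage)
/// Applies exposure, saturation, and sharpening to a captured frame.
func correctedImage(from pixelBuffer: CVPixelBuffer,
                    using correction: FrameCorrection) -> CIImage {
    CIImage(cvPixelBuffer: pixelBuffer)
        .applyingFilter("CIExposureAdjust",
                        parameters: [kCIInputEVKey: correction.exposureEV])
        .applyingFilter("CIColorControls",
                        parameters: [kCIInputSaturationKey: correction.saturation])
        .applyingFilter("CISharpenLuminance",
                        parameters: [kCIInputSharpnessKey: correction.sharpness])
}
#endif
```

The returned CIImage is lazy; nothing is processed until you render it through a CIContext, so create that context once and reuse it rather than building one per frame.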

Project and map textures onto your mesh

Once you have a strong source image, the real technical work begins. This is where you translate a flat camera frame into something that actually belongs on a 3D surface. Texture projection isn’t magic; it’s math, coordinate spaces, and careful handling of edge cases. Get this right, and your texture hugs the mesh naturally. This process, known as texture mapping, can feel like a black box of matrix math. But let’s break it down in a way that actually makes sense.

Understand the pipeline: From world space to texture coordinates

At its core, texture mapping is about answering a simple question for every vertex in your 3D mesh: “Which pixel in the 2D camera image should I get my color from?” To do this, you have to translate the 3D position of each vertex into a 2D coordinate on your captured image.

Think of it like creating a custom sewing pattern for your 3D model. Your mesh is the fabric, and the camera image is the printed design you want to apply. The coordinate transformation process is how you figure out how to cut the pattern so the design lines up perfectly on the final 3D shape.

ARKit provides the necessary transformation matrices to do this. You’ll take a vertex from its 3D position in the world (world space), transform it into the camera’s frame of reference (view space), and then apply the projection matrix (clip space). After a perspective divide and a remap to the 0 to 1 range, you end up with a set of 2D coordinates called UVs that map directly to the pixels in your captured frame.

Generate UV coordinates for your ARMeshGeometry

This is the most technical part, but you’ve got this. The goal is to calculate a UV coordinate (a 2D vector where U and V are between 0.0 and 1.0) for each vertex in your ARMeshGeometry.

The process looks like this:

- Get the vertex: For each vertex in your mesh, you start with its 3D position in model space.

- Transform to world space: Apply the mesh anchor’s transform to get the vertex’s position in the shared world coordinate system.

- Project into the camera: Multiply by the camera’s view matrix to move the point into camera space, then by its projection matrix to reach clip space. ARCamera supplies both for each ARFrame. This is the heavy lifting.

- Normalize the coordinates: Divide the clip-space x and y by w to get normalized device coordinates (-1 to +1), then remap them to texture space (0 to 1), flipping the vertical axis if your texture’s origin is at the top-left. This is your final UV coordinate.

One common pitfall here is dealing with vertices that aren’t visible to the camera. Vertices outside the frame produce UVs outside the 0 to 1 range, and vertices behind the camera produce mirrored, meaningless coordinates. Discard triangles that have a vertex behind the camera or that fall fully outside the viewport, and clamp only the ones that straddle the frame edge, to avoid weird texture stretching from off-screen.
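The projection steps above can be sketched in plain Swift. Everything here is illustrative: Matrix4 is a tiny row-major stand-in for simd_float4x4 (which is column-major), UV and projectToUV are hypothetical names, and the sketch assumes the usual convention that visible points end up with a positive clip-space w.

```swift
/// Minimal 4x4 matrix, stored row-major as m[row][column].
struct Matrix4 {
    var m: [[Double]]

    static let identity = Matrix4(m: [[1, 0, 0, 0], [0, 1, 0, 0],
                                      [0, 0, 1, 0], [0, 0, 0, 1]])

    /// Multiplies the matrix by a column vector (x, y, z, w).
    func transform(_ v: [Double]) -> [Double] {
        m.map { row in zip(row, v).reduce(0.0) { $0 + $1.0 * $1.1 } }
    }
}

struct UV: Equatable {
    var u: Double
    var v: Double
}

/// Projects a vertex from anchor-local space into image UVs.
/// Returns nil when the vertex is behind the camera or outside the frame.
func projectToUV(localPosition p: SIMD3<Double>,
                 anchorTransform: Matrix4,
                 view: Matrix4,
                 projection: Matrix4) -> UV? {
    // Local -> world -> camera -> clip space.
    let world = anchorTransform.transform([p.x, p.y, p.z, 1])
    let clip = projection.transform(view.transform(world))

    // Points behind the camera have w <= 0 and must not be divided.
    let w = clip[3]
    guard w > 0 else { return nil }

    // Perspective divide gives normalized device coordinates in -1...1.
    let ndcX = clip[0] / w
    let ndcY = clip[1] / w
    guard abs(ndcX) <= 1, abs(ndcY) <= 1 else { return nil }

    // Remap to 0...1 and flip V so the origin sits at the image's top-left.
    return UV(u: 0.5 * ndcX + 0.5, v: 0.5 - 0.5 * ndcY)
}
```

On device, the inputs would come from meshAnchor.transform, frame.camera.viewMatrix(for:), and frame.camera.projectionMatrix(for:viewportSize:zNear:zFar:). ARCamera also has projectPoint(_:orientation:viewportSize:), which wraps the same math. Keep in mind that the captured image is stored in the sensor’s landscape orientation, so the orientation and viewport you pass should match the image rather than the screen.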

Apply the texture: How to apply advanced texture mapping in ARKit

With your UVs generated, the final step is to put it all together. You’ll load your pre-processed camera frame as a texture and use your newly calculated UVs to tell the renderer how to apply it to the mesh.

Here’s a clear, step-by-step guide:

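- Create a texture: Convert your CVPixelBuffer (the camera frame) into a texture that your rendering engine, like Metal or SceneKit, can understand. With Metal, this is usually an MTLTexture. Keep in mind that ARKit delivers capturedImage in a bi-planar YCbCr format, so convert it to RGB first, for example by rendering it through Core Image.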

- Attach UVs to the mesh: Add the list of UV coordinates you generated as an attribute to your mesh geometry. This pairs each vertex with its corresponding texture coordinate.

- Configure the material: Create a material for your mesh. Instead of a simple color, you’ll assign your camera frame texture to its diffuse property.

- Render: The GPU takes over. For each pixel on a mesh triangle, it interpolates the UV coordinates from the vertices and samples the correct color from your texture. The result? A 3D mesh that perfectly reflects the real-world environment it just captured.
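If you’re rendering with SceneKit, the steps above might look like the sketch below. TexturedMesh and makeGeometry are hypothetical names, the texture is assumed to already be an RGB CGImage, and the constant lighting model is just one reasonable choice for showing a captured frame without extra shading.

```swift
#if canImport(SceneKit)
import SceneKit
#endif

/// Vertex positions, one UV per vertex, and triangle indices.
struct TexturedMesh {
    let positions: [SIMD3<Float>]
    let uvs: [SIMD2<Float>]
    let indices: [UInt32]

    /// Fails when UVs and vertices are out of step, the index list is not
    /// made of whole triangles, or an index points past the vertex list.
    init?(positions: [SIMD3<Float>], uvs: [SIMD2<Float>], indices: [UInt32]) {
        guard positions.count == uvs.count,
              indices.count % 3 == 0,
              indices.allSatisfy({ Int($0) < positions.count }) else { return nil }
        self.positions = positions
        self.uvs = uvs
        self.indices = indices
    }
}

#if canImport(SceneKit)
extension TexturedMesh {
    /// Builds renderable geometry with the camera frame as its diffuse texture.
    func makeGeometry(texture: CGImage) -> SCNGeometry {
        let vertexSource = SCNGeometrySource(
            vertices: positions.map { SCNVector3($0.x, $0.y, $0.z) })
        let uvSource = SCNGeometrySource(
            textureCoordinates: uvs.map { CGPoint(x: CGFloat($0.x), y: CGFloat($0.y)) })
        let element = SCNGeometryElement(indices: indices, primitiveType: .triangles)

        let geometry = SCNGeometry(sources: [vertexSource, uvSource], elements: [element])
        let material = SCNMaterial()
        material.diffuse.contents = texture
        material.lightingModel = .constant   // show the captured colors as they are
        geometry.materials = [material]
        return geometry
    }
}
#endif
```

Validating the arrays up front is cheap insurance: a UV list that is one entry short tends to show up as scrambled textures rather than a clear error.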

Enhance texture quality with advanced techniques

Getting a texture onto a mesh is a huge win. But to really elevate your work and command higher rates, you need to push beyond the basics. These advanced techniques are where you can add a layer of realism and dynamism that sets your portfolio apart.

Write custom shaders for dynamic lighting and effects

Think of shaders as instructions that tell the GPU how to color each pixel. Standard texturing just says, “Look up the color at this UV coordinate.” A custom shader lets you say so much more. This is your key to professional-looking assets.

A common issue with camera-projected textures is that they have real-world lighting and shadows baked right into them. If you drop a virtual light into your AR scene, it won't affect the baked-in lighting, which instantly breaks the illusion.

Here’s a simple but powerful shader idea: create one that corrects for lighting inconsistencies. You can sample the texture color, then use ARKit’s light estimate (or the direction of your own virtual light) together with the mesh’s surface normals to either brighten parts of the texture that are in shadow or darken parts that are directly lit in your virtual scene. Directional estimates are not available in every ARKit configuration, so be ready to fall back to ambient intensity alone. This makes the captured texture feel like it truly belongs in your AR experience, reacting to virtual lights as if it were a native 3D asset.
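To make the per-pixel math concrete, here is a CPU-side reference in Swift. In a real app this logic would live in a Metal fragment function; relit and unit are hypothetical names, and the simple Lambert term is an assumption standing in for whatever lighting model your scene uses. It also treats the captured color as if it were unlit albedo, which is only an approximation.

```swift
typealias Color = SIMD3<Double>   // linear RGB, each channel 0...1
typealias Vec3 = SIMD3<Double>

/// Returns v scaled to length 1 (or v unchanged if it has zero length).
func unit(_ v: Vec3) -> Vec3 {
    let length = (v * v).sum().squareRoot()
    return length > 0 ? v / length : v
}

/// Shades a sampled texel with one virtual directional light.
/// - lightDirection: the direction the light travels, from light to surface.
/// - ambient: floor brightness so unlit areas do not go fully black.
func relit(texel: Color, normal: Vec3, lightDirection: Vec3,
           lightIntensity: Double, ambient: Double) -> Color {
    let towardLight = unit(lightDirection) * -1
    // Lambert term: 1 when the surface faces the light, 0 when it faces away.
    let nDotL = max(0, (unit(normal) * towardLight).sum())
    let shade = min(1, ambient + lightIntensity * nDotL)
    return texel * shade
}
```

With ambient and lightIntensity both at 0.5, a surface facing the light keeps its captured color and a surface facing away drops to half brightness. Because shade is capped at 1, this version only ever darkens; brightening shadowed regions would need an estimate of the original lighting to divide out first.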

Blend multiple camera frames to create a super-texture

Projecting from a single camera frame is great, but it has limits. Surfaces that are at a steep angle to the camera get stretched, and parts of the mesh occluded from that single viewpoint have no texture data at all.

The solution is to create a super-texture by blending multiple camera frames. This is a major step up. The workflow looks something like this:

- Capture multiple frames: As the user moves around, capture several high-quality frames from different viewpoints.

- Project from each view: For each captured frame, project the mesh vertices to generate a set of UVs, just like before.

- Blend in a shader: In a custom shader, you can now sample from multiple textures. For each pixel you render, you can decide which texture provides the best data. A good way to do this is to calculate the angle between the surface normal and the camera direction for each frame. The frame that was captured most directly facing the surface will have the least distortion, so you give its texture more weight in the final blend.

This technique fills in gaps, increases overall texture resolution, and dramatically reduces stretching artifacts. It’s more complex, but it’s how you build AR experiences that feel truly grounded and realistic.
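The weighting rule from the blend step can be written down in a few lines. This is a sketch of one possible scheme, not the only one: blendWeights is a hypothetical name, squaring the cosine is an arbitrary choice to favor head-on views, and occlusion between the camera and the surface is not checked at all.

```swift
typealias Vec3 = SIMD3<Double>

func dotProduct(_ a: Vec3, _ b: Vec3) -> Double { (a * b).sum() }

func normalized(_ v: Vec3) -> Vec3 {
    let length = dotProduct(v, v).squareRoot()
    return length > 0 ? v / length : v
}

/// Returns one weight per captured frame. Weights sum to 1, or are all zero
/// when no camera sees the front of the surface.
func blendWeights(surfacePoint: Vec3,
                  surfaceNormal: Vec3,
                  cameraPositions: [Vec3]) -> [Double] {
    let normal = normalized(surfaceNormal)
    let raw = cameraPositions.map { camera -> Double in
        let towardCamera = normalized(camera - surfacePoint)
        // Cosine of the angle between the normal and the view direction:
        // 1 for a head-on view, 0 for a grazing or back-facing one.
        let facing = max(0, dotProduct(normal, towardCamera))
        return facing * facing
    }
    let total = raw.reduce(0, +)
    return total > 0 ? raw.map { $0 / total } : raw
}
```

For a surface at the origin facing +Z, a camera straight ahead, one at 45 degrees, and one behind the surface get weights of roughly 0.67, 0.33, and 0. In the shader, you would multiply each frame’s sampled color by its weight and sum the results.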

Optimize your textures for real-time performance

You’ve built a beautiful, realistically textured asset. The client loves it… until they run it on an iPhone 11 and the frame rate tanks. This section is the freelance gig-saver. Performance isn’t a feature; it’s a requirement.

The freelance gig-saver: Techniques for augmented reality texture optimization

Your client needs their app to be snappy and responsive. Large, uncompressed textures are one of the biggest killers of mobile performance because they consume two precious resources: memory bandwidth and storage.

Here are the three core techniques you absolutely must know:

- Texture compression (ASTC): This is non-negotiable for mobile. ASTC is a compression format that dramatically reduces the memory footprint of your textures with minimal loss in visual quality, and the GPU decodes it in hardware on all modern iOS devices. Using an uncompressed texture is like shipping a PNG when a JPG would do: it just wastes resources.

- Mipmapping: A mipmap chain is a set of pre-calculated, progressively smaller versions of your texture. When a textured surface is far from the camera, the GPU samples a smaller mip level instead of the full-resolution texture. This saves memory bandwidth and prevents ugly shimmering artifacts on distant objects, at the cost of roughly one-third more texture memory. Most engines can generate mipmaps for you with a single checkbox.

- Texture atlases: If your AR scene uses many small objects with different textures, you might be hurting performance. Every material switch is a new instruction for the GPU (a draw call). A texture atlas is a technique where you combine many smaller textures into a single, larger image sheet. This allows you to render multiple objects with a single material, reducing draw calls and improving performance.
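Packing textures into an atlas means every object’s UVs have to be remapped into its slot. Here is a minimal sketch of that remap; AtlasRegion and atlasUV are hypothetical names, and the layout is assumed to come from whatever packing tool you use.

```swift
/// Where one source texture sits inside the atlas, in pixels.
struct AtlasRegion {
    var x: Int
    var y: Int
    var width: Int
    var height: Int
}

/// Converts a UV in the source texture's own 0...1 range into atlas UVs.
func atlasUV(u: Double, v: Double,
             region: AtlasRegion,
             atlasWidth: Int, atlasHeight: Int) -> (u: Double, v: Double) {
    let pixelX = Double(region.x) + u * Double(region.width)
    let pixelY = Double(region.y) + v * Double(region.height)
    return (u: pixelX / Double(atlasWidth), v: pixelY / Double(atlasHeight))
}
```

A texture placed in the top-right quarter of a 1024 x 1024 atlas (x: 512, y: 0, 512 x 512) has its centre (0.5, 0.5) mapped to (0.75, 0.25). Leave a few pixels of padding between regions, because filtering at lower mip levels can pull in colors from neighboring textures.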

Balance visual quality and performance for mobile AR

Optimization is all about making smart trade-offs. Does that background prop really need a 4K texture, or would a compressed 1K texture look just as good while saving megabytes of memory? The key is to find the sweet spot between looking great and running great.
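It helps to put numbers on that trade-off. The sketch below estimates texture memory for uncompressed RGBA8 and for ASTC, where every block takes 16 bytes whatever its pixel footprint. The function names are hypothetical, and real allocations may add alignment or padding on top of these figures.

```swift
/// Bytes for one level of an uncompressed RGBA8 texture (4 bytes per pixel).
func rgba8Bytes(width: Int, height: Int) -> Int {
    width * height * 4
}

/// Bytes for one level of an ASTC texture. Each block is 16 bytes, so a
/// larger block footprint (for example 6x6 instead of 4x4) means fewer bytes.
func astcBytes(width: Int, height: Int, blockWidth: Int, blockHeight: Int) -> Int {
    let blocksAcross = (width + blockWidth - 1) / blockWidth     // round up
    let blocksDown = (height + blockHeight - 1) / blockHeight    // round up
    return blocksAcross * blocksDown * 16
}

/// Adds up a per-level size function over the full mip chain down to 1x1.
func mipChainBytes(width: Int, height: Int, levelBytes: (Int, Int) -> Int) -> Int {
    var w = width, h = height, total = 0
    while true {
        total += levelBytes(w, h)
        if w == 1 && h == 1 { break }
        w = max(1, w / 2)
        h = max(1, h / 2)
    }
    return total
}
```

By this estimate, a 4096 x 4096 RGBA8 texture is 64 MiB before mipmaps, while a 1024 x 1024 ASTC 4x4 texture is 1 MiB. That gap is the kind of saving worth checking against how large the prop actually appears on screen.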

Here’s a quick checklist to guide your decisions:

- Know your target: Are you building for a recent flagship like the iPhone 15 Pro, or does it need to run on older hardware? Your performance budget changes dramatically.

- Profile your app: Don’t guess where the bottlenecks are. Use Xcode’s tools, like the GPU Frame Debugger, to see exactly how much time is being spent on rendering and where your memory is going. This lets you focus your optimization efforts where they’ll have the most impact.

- Prioritize a stable frame rate: For AR, a smooth, stable frame rate is more important than maximum texture resolution. A dropped frame breaks the illusion of reality far more than a slightly less crisp texture will.

Mastering these optimization techniques shows clients you’re not just an artist, but a professional who understands the technical constraints of the platform. That’s how you build a reputation, get rehired, and land bigger and better gigs.

From sticker to seamless: It’s all in the workflow

We've covered a lot of ground, from grabbing the perfect camera frame to writing shaders that blend textures like a pro. But if you take one thing away from this guide, let it be this:

You’re no longer just slapping a photo onto a mesh. You’re crafting a complete material, one that respects the geometry, reacts to light, and runs smoothly on the devices your clients actually use. That’s the shift in thinking that separates the pros from the rest.

Think about your next project. When you show it to a potential client, you won't just be showing them a 3D model. You'll be showing them an experience that feels grounded and real. You can talk confidently about texture atlases and mipmapping, proving you care about their app’s performance. You can explain how your custom shader works to make the asset feel alive in the scene.

That’s the difference between a junior artist and a go-to professional. It's not about knowing one trick; it's about mastering the entire pipeline. These are the skills that get you noticed, justify higher rates, and turn one-off gigs into lasting client relationships.

You’ve got the roadmap. Now go build something amazing.

Max Calder

Max Calder is a creative technologist at Texturly. He specializes in material workflows, lighting, and rendering, but what drives him is enhancing creative workflows using technology. Whether he's writing about shader logic or exploring the art behind great textures, Max brings a thoughtful, hands-on perspective shaped by years in the industry. His favorite kind of learning? Collaborative, curious, and always rooted in real-world projects.

Latest Blogs

A Practical Guide for How to Choose The Right Concrete Texture fo...

3D textures

PBR textures

Mira Kapoor

Apr 29, 2026

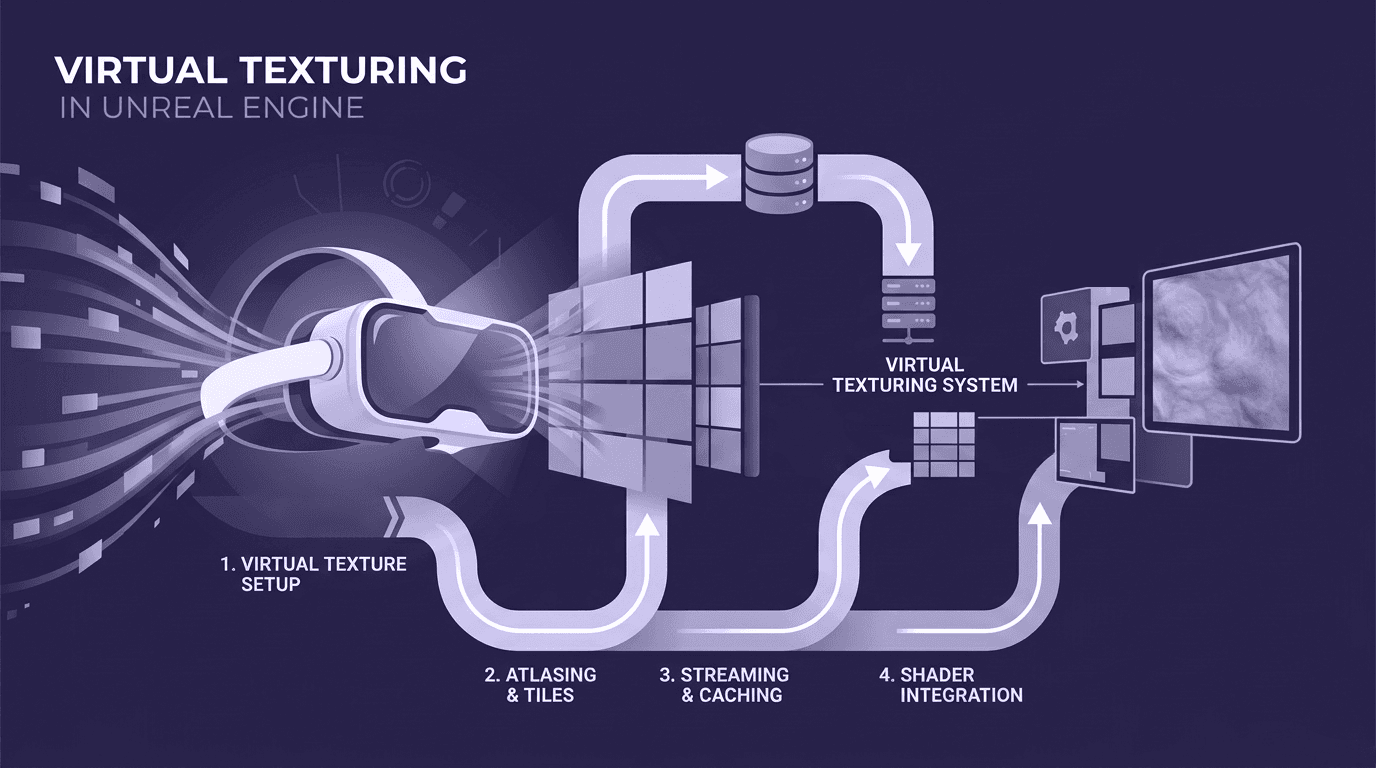

VR Textures in Unreal Engine: A Beginner’s Guide to Setup, Optimi...

3D textures

AI in 3D design

Max Calder

Apr 27, 2026

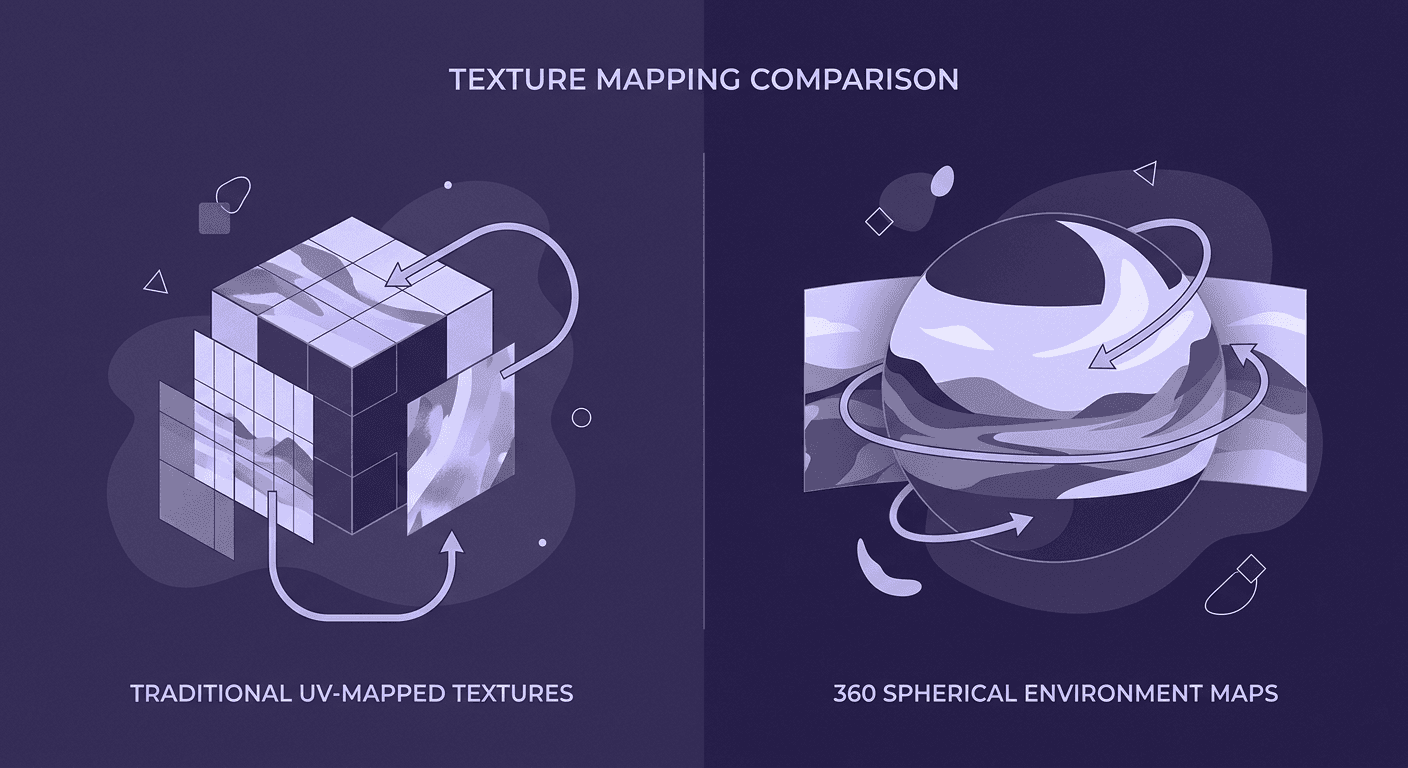

360 Environment Textures vs Traditional Textures: Key Differences...

AI in 3D design

PBR mapping

Max Calder

Apr 24, 2026