Future-Proofing the Metaverse: Emerging Trends in Texturing

By Mira Kapoor | 23 July 2025 | 14 mins read

Every creative director knows the feeling. You see a stunning new asset from your artist, and your first thought isn’t about its beauty, it’s about the performance budget. It’s that constant tug-of-war between visual fidelity and framerate, and it’s the single biggest bottleneck for building the immersive, scalable worlds the metaverse promises.

This guide is about breaking that cycle for good. We’ll unpack the key texturing trends that are actually moving the industry forward, from AI-powered creation and advanced real-time rendering to materials that respond to the user. This isn’t about chasing buzzwords; it’s a practical look at how you can future-proof your pipeline, ship incredible experiences, and finally win that tug-of-war.

The core challenge: The fidelity vs. framerate tug-of-war

The core tension in any real-time 3D project feels like a tug-of-war where you’re the rope. On one end, you have the demand for breathtaking visual fidelity. On the other, a non-negotiable need for a smooth, consistent framerate. Every creative director knows this battle. You see a beautifully sculpted asset from your artist, rich with detail, and you immediately start the mental calculation: What will this cost us in performance?

Why traditional texturing workflows feel like a bottleneck

For years, the workflow has been predictable but punishing. An artist spends days, sometimes weeks, manually creating a suite of PBR textures for a single hero asset. They wrestle with UVs, bake normal maps, and paint imperfections to tell a story. It’s artistry, but it’s also a time-consuming craft that doesn't scale well.

This manual process creates two major constraints:

1. Time and cost: The sheer hours required to texture environments for the metaverse are immense. If every single rock, wall, and prop needs bespoke texturing, the budget and timeline swell beyond feasibility. You’re forced to make compromises, reusing assets aggressively, simplifying geometry, or cutting scope. It feels less like world-building and more like asset management.

2. The performance ceiling: Every high-resolution texture eats into the memory budget, and every extra material adds to the draw call count. The result? You hit a performance wall. To claw back frames, you downscale textures until they’re blurry, aggressively merge materials, and bake lighting until your world feels static and lifeless. The very detail you fought for gets sacrificed for performance.
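To put rough numbers on that memory pressure, here is a minimal back-of-the-envelope sketch. The function name and the sample sizes are illustrative assumptions, and the math ignores block-size padding and engine overhead, so treat the results as ballpark figures rather than what any particular engine will report.

```python
def mip_chain_bytes(width, height, bytes_per_pixel, with_mips=True):
    """Approximate memory for one texture, optionally including its full mip chain."""
    total = 0.0
    w, h = width, height
    while True:
        total += w * h * bytes_per_pixel
        if not with_mips or (w == 1 and h == 1):
            break
        # Each mip level halves both dimensions, down to 1x1.
        w, h = max(1, w // 2), max(1, h // 2)
    return int(total)


MIB = 1024 * 1024

# One 4096x4096 map stored as uncompressed RGBA8 (4 bytes per pixel).
rgba8 = mip_chain_bytes(4096, 4096, 4)
# The same map at 1 byte per pixel, the rate of an 8-bit-per-pixel block format.
compressed = mip_chain_bytes(4096, 4096, 1)

print(f"RGBA8 with mips:     {rgba8 / MIB:.1f} MiB")
print(f"1 byte/px with mips: {compressed / MIB:.1f} MiB")
# A PBR set of four such maps multiplies the figure by four.
print(f"Four-map RGBA8 set:  {4 * rgba8 / MIB:.1f} MiB")
```

A single uncompressed 4K map with mips comes out at roughly 85 MiB under these assumptions, which is why a handful of hero assets can exhaust the budget of a standalone headset.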

This cycle is the single biggest bottleneck to creating the vast, rich worlds the metaverse promises. We’re using workflows designed for contained, linear games to build persistent, ever-expanding universes. It’s like trying to build a skyscraper with the tools of a cabinet maker: precise, beautiful, but fundamentally wrong for the scale of the job.

Setting the stage for next-generation metaverse design trends

User expectations are set by cinematic games and stunning VFX. They expect immersive, seamless worlds that feel alive. A static environment, no matter how beautiful, breaks that illusion. When a world doesn't react to a user's presence, it feels like a diorama, not a destination.

The limitations are clear: static, manually created environments can't deliver the dynamic, scalable, and persistent experiences the metaverse requires. This isn't just about making things look better; it’s about building worlds that can grow and change.

To break free from this fidelity vs. framerate bind, we can't just work harder. We need to work smarter. This requires a fundamental shift in how we think about creating and rendering textures, moving from manual craft to intelligent systems. And that shift is already underway.

Trend 1: Smarter asset creation: AI and procedural generation

So, how do you build a world the size of a city without a team of a thousand artists? You teach the machines to handle the heavy lifting. The first major trend is about moving asset creation from a purely manual task to a collaborative process between artist and algorithm. This isn't about replacing artists; it's about augmenting them.

How are texturing technologies evolving to speed up workflows?

The answer lies in two key areas: AI-powered material generation and proceduralism. Think of these as powerful new brushes in your team’s toolkit.

AI-powered material generation: The latest crop of AI tools can generate entire PBR material sets from a simple text prompt. Imagine typing "mossy cobblestone path with small puddles, photorealistic" and getting a full set of albedo, roughness, normal, and displacement maps in seconds. This isn't a final product, but it's an incredible starting point. Instead of starting from a blank canvas, your artists start with a 90% solution that they can then refine and art-direct. It transforms the workflow from laborious creation to rapid iteration and creative polishing.

Procedural techniques: Proceduralism, using tools like Substance 3D Designer or Houdini, isn’t new, but its application in the metaverse is becoming essential. Instead of creating a single, static texture, artists design a system that can generate infinite variations. They define the rules (how cracks form, where moss grows, how dirt accumulates) and the system builds the texture. This is the only practical way to create vast, non-repeating environments. You don’t make one brick wall; you design a brick wall generator that can texture an entire city with unique, logical variations.
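To make the "brick wall generator" idea concrete, here is a toy sketch in plain Python. It is not how Substance 3D Designer or Houdini work internally; the function name, parameters, and the four rules are all assumptions chosen for illustration. The point is only that the artist authors rules, and a seed picks one variation.

```python
import random


def brick_wall(seed, width=64, height=32, brick_w=16, brick_h=8, mortar=1):
    """Return a grayscale grid (rows of floats in [0, 1]): one wall variation per seed."""
    rng = random.Random(seed)  # local RNG so every seed is reproducible
    tints = {}                 # base tone per brick, keyed by (course, column)
    grid = []
    for y in range(height):
        course = y // brick_h
        # Rule 1: shift every other course by half a brick (running bond).
        offset = brick_w // 2 if course % 2 else 0
        row = []
        for x in range(width):
            column = (x + offset) // brick_w
            in_mortar = (y % brick_h) < mortar or ((x + offset) % brick_w) < mortar
            if in_mortar:
                row.append(0.1)  # Rule 2: dark mortar lines between bricks
                continue
            key = (course, column)
            if key not in tints:
                # Rule 3: each brick gets its own base tone.
                tints[key] = rng.uniform(0.45, 0.75)
            # Rule 4: a little per-pixel grit on top of the brick tone.
            value = tints[key] + rng.uniform(-0.05, 0.05)
            row.append(min(1.0, max(0.0, value)))
        grid.append(row)
    return grid


# Same rules, different seeds: every wall is unique but follows the same logic.
walls = [brick_wall(seed) for seed in range(3)]
```

Because the output depends only on the seed and the rules, a building's wall can be regenerated on demand instead of being stored as a unique texture, and an art-direction change means editing one rule rather than repainting every wall.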

The practical impact on the metaverse environment design

For a creative director, the benefits here are direct and transformative for your metaverse environment design. You're not just getting more assets, you're fundamentally changing what your team is capable of achieving.

First, you reduce the time spent on repetitive tasks. Your senior artists, the ones with the keenest eyes, should not be spending their days creating tileable textures for floors and walls. Their talent is better spent defining the visual language and aesthetic rules for a procedural system. Let the machine generate the 50 variations, and let your artist make the final creative call.

Second, you empower smaller teams to create larger worlds. With AI and procedural workflows, a small, highly skilled team can generate the amount of content that would have previously required a massive outsourcing budget or an army of junior artists. This levels the playing field, allowing studios to focus their resources on creating unique, landmark assets and experiences, while the systems handle the environmental filler.

This approach shifts the artist's role from digital painter to a digital botanist of sorts: they design the seeds from which entire worlds can grow. But once you've generated this massive world, how do you make it run?

Trend 2: High-fidelity performance: Advanced rendering techniques

Creating assets faster is only half the solution. If those assets still crash the system, you're back where you started. The second major trend tackles the performance side of the equation, enabling high-fidelity digital world rendering across a wide range of hardware, from high-end VR rigs to standalone headsets.

Optimizing digital world rendering for cross-platform experiences

The old paradigm of baked lighting and strict texture budgets is too rigid for the dynamic, cross-platform nature of the metaverse. The real breakthroughs are happening in how we handle lighting and texture data in real-time.

- The shift to real-time global illumination (GI): For years, realistic lighting meant pre-calculating, or baking, lightmaps, a time-consuming process that results in static shadows and reflections. Any change required a complete rebake. Modern engines are embracing real-time GI solutions (like Unreal Engine's Lumen). This means lighting is calculated on the fly, allowing for dynamic time-of-day, player-controlled lights, and interactive environments that respond to lighting changes instantly. It’s a move from a static movie set to a live, responsive world.

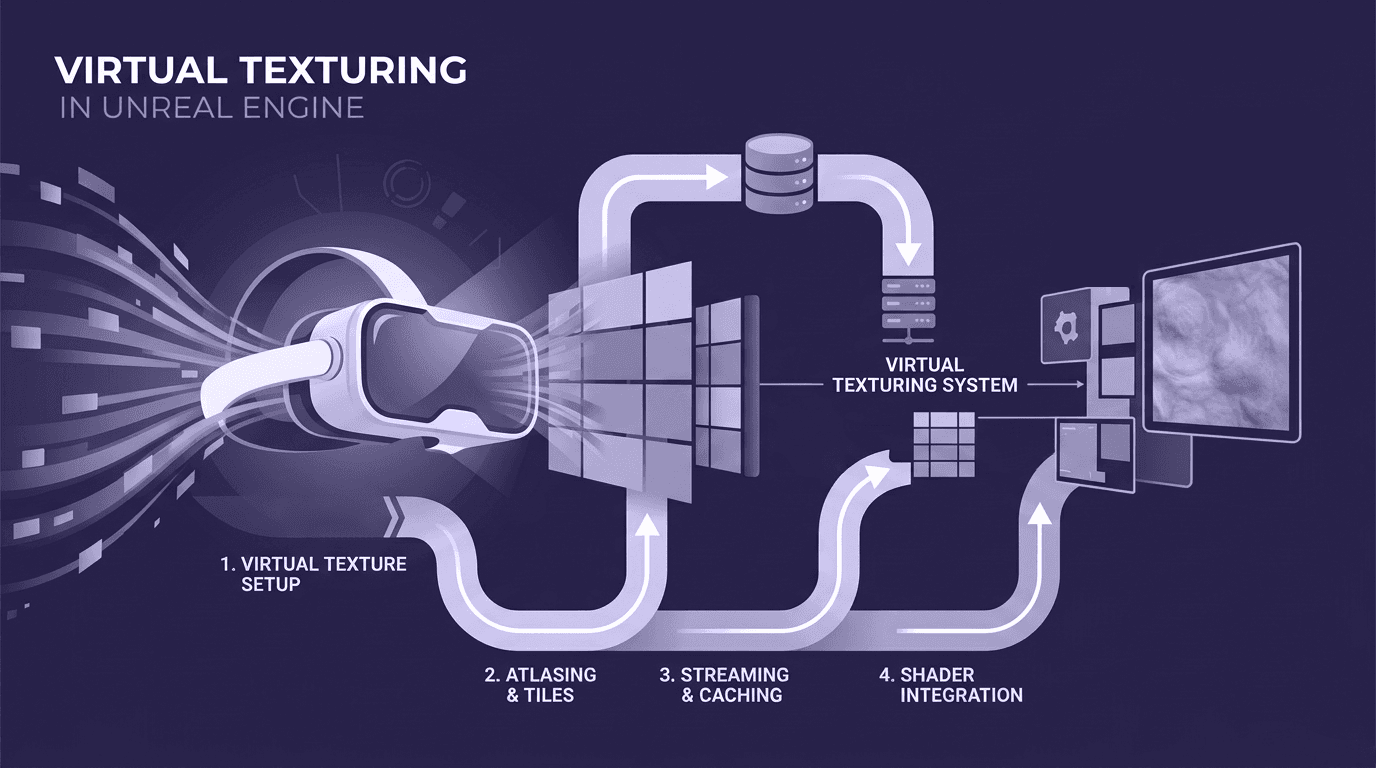

- Streaming for texture budgets: Texture memory has always been a hard limit, especially on mobile and standalone VR hardware. Texture streaming treats your texture data like Netflix treats video. Instead of loading every texture for a level into memory at once, the system streams in the highest-resolution versions only for the surfaces that are prominent in the current view and keeps lower-resolution versions of everything else. The memory limit doesn't disappear, but it stops being a fixed per-level texture budget and becomes a pool that is shared dynamically, allowing for worlds that are far larger and more detailed than what could fit into a device's VRAM all at once.
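The streaming idea can be sketched in a few lines. This is a simplified illustration, not any engine's actual streamer: the function names, the square power-of-two textures, the 1-byte-per-pixel cost, and the greedy "shrink the biggest resident texture first" policy are all assumptions made for the example.

```python
import math


def desired_mip(texture_size, pixels_on_screen):
    """Mip 0 is full resolution; each higher mip halves it. Assumes power-of-two sizes."""
    smallest = int(math.log2(texture_size))
    if pixels_on_screen <= 0:
        return smallest  # not visible: keep only the 1x1 mip
    ratio = texture_size / pixels_on_screen
    mip = math.floor(math.log2(ratio)) if ratio > 1 else 0
    return min(mip, smallest)


def mip_bytes(texture_size, mip, bytes_per_pixel=1):
    """Memory for the top resident mip only (the smaller mips are ignored here)."""
    side = max(1, texture_size >> mip)
    return side * side * bytes_per_pixel


def plan_streaming(textures, budget_bytes):
    """textures maps name -> (texture_size, pixels_on_screen). Returns name -> mip."""
    plan = {name: desired_mip(size, px) for name, (size, px) in textures.items()}

    def resident(name):
        return mip_bytes(textures[name][0], plan[name])

    # Over budget: drop one mip at a time from whichever texture holds the most memory.
    while sum(resident(n) for n in plan) > budget_bytes:
        droppable = [n for n in plan if (textures[n][0] >> plan[n]) > 1]
        if not droppable:
            break
        plan[max(droppable, key=resident)] += 1
    return plan


# Hypothetical scene: a hero prop filling the view, a distant rock, an off-screen sky.
scene = {"hero": (4096, 2048), "rock": (2048, 128), "sky": (1024, 0)}
print(plan_streaming(scene, budget_bytes=8 * 1024 * 1024))
print(plan_streaming(scene, budget_bytes=2 * 1024 * 1024))
```

With the larger pool each texture gets the mip its screen coverage asks for; with the smaller pool only the hero prop is stepped down, because it is the one holding most of the memory.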

Key technological innovations in virtual environment design

Beyond lighting and streaming, fundamental changes in how game engines handle geometry and materials are unlocking new levels of detail.

Leveraging Nanite-style virtualization: One of the most significant innovations in virtual environment design is virtualized geometry, famously implemented as Nanite in Unreal Engine 5. Think of it as a pixel-level LOD (Level of Detail) system. It renders only the geometric detail that can actually be resolved by the pixels on your screen. This means artists can import film-quality assets with millions of polygons directly into the engine without having to manually create multiple LODs or bake details down to a normal map. The rendering cost is tied far more closely to what's on screen than to the complexity of the source asset. For texturing, this is liberating. You can now rely more on the actual geometry for fine details, knowing the system can handle it efficiently.
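The principle of tying detail to pixels can be illustrated with a classic screen-space error test. To be clear, this is not Nanite's algorithm (Nanite works on clusters of triangles inside a mesh, not on whole-object LODs); it is a deliberately simplified sketch, and the function names, the 1080-pixel screen, the 60-degree field of view, and the sample error values are assumptions.

```python
import math


def projected_error_px(geometric_error_m, distance_m, screen_height_px=1080, fov_y_deg=60.0):
    """Approximate on-screen size, in pixels, of a world-space error at a given distance."""
    scale = screen_height_px / (2.0 * math.tan(math.radians(fov_y_deg) / 2.0))
    return geometric_error_m / max(distance_m, 1e-6) * scale


def pick_lod(lod_errors_m, distance_m, max_error_px=1.0):
    """lod_errors_m[i] is the simplification error of LOD i in meters, finest first.

    Returns the coarsest LOD whose error stays at or below max_error_px on screen,
    falling back to LOD 0 (the full-detail mesh) when nothing coarser qualifies.
    """
    chosen = 0
    for index, error in enumerate(lod_errors_m):
        if projected_error_px(error, distance_m) <= max_error_px:
            chosen = index
    return chosen


# Hypothetical asset: LOD 0 is exact, each coarser LOD deviates by 10x more.
errors = [0.0, 0.001, 0.01, 0.1]
for distance in (0.5, 1.0, 10.0, 100.0):
    print(distance, "m -> LOD", pick_lod(errors, distance))
```

The takeaway for texturing is the threshold itself: once the simplification error drops below about a pixel, the viewer can't tell the difference, so the detail you pay for is bounded by screen resolution rather than by how dense the source sculpt is.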

The role of advanced shaders: Shaders are evolving from simple material definitions to powerful, performant tools. Using node-based shader editors, technical artists can create incredibly complex and realistic materials (think iridescent coatings, multi-layered fabrics, or complex parallax effects), often with greater efficiency than using multiple texture maps. These shaders can also be built to be performant, creating stunning visuals that are cheaper to render than old, brute-force methods. It's about achieving more realism and complexity in the code, not just in the texture files.

With smarter asset creation and more advanced rendering, we can build bigger, more beautiful worlds. But the true frontier lies beyond visuals. It's in making those worlds feel real.

The real frontier: Texturing for multi-sensory interaction

Here’s the insight that most studios are still missing: the future of texturing isn't just about what you see. It's about creating textures that are responsive, data-driven, and even tactile. This is the leap from creating pretty pictures to building truly immersive digital environments that blur the line between the physical and the virtual.

Moving beyond visuals to build truly immersive digital environments

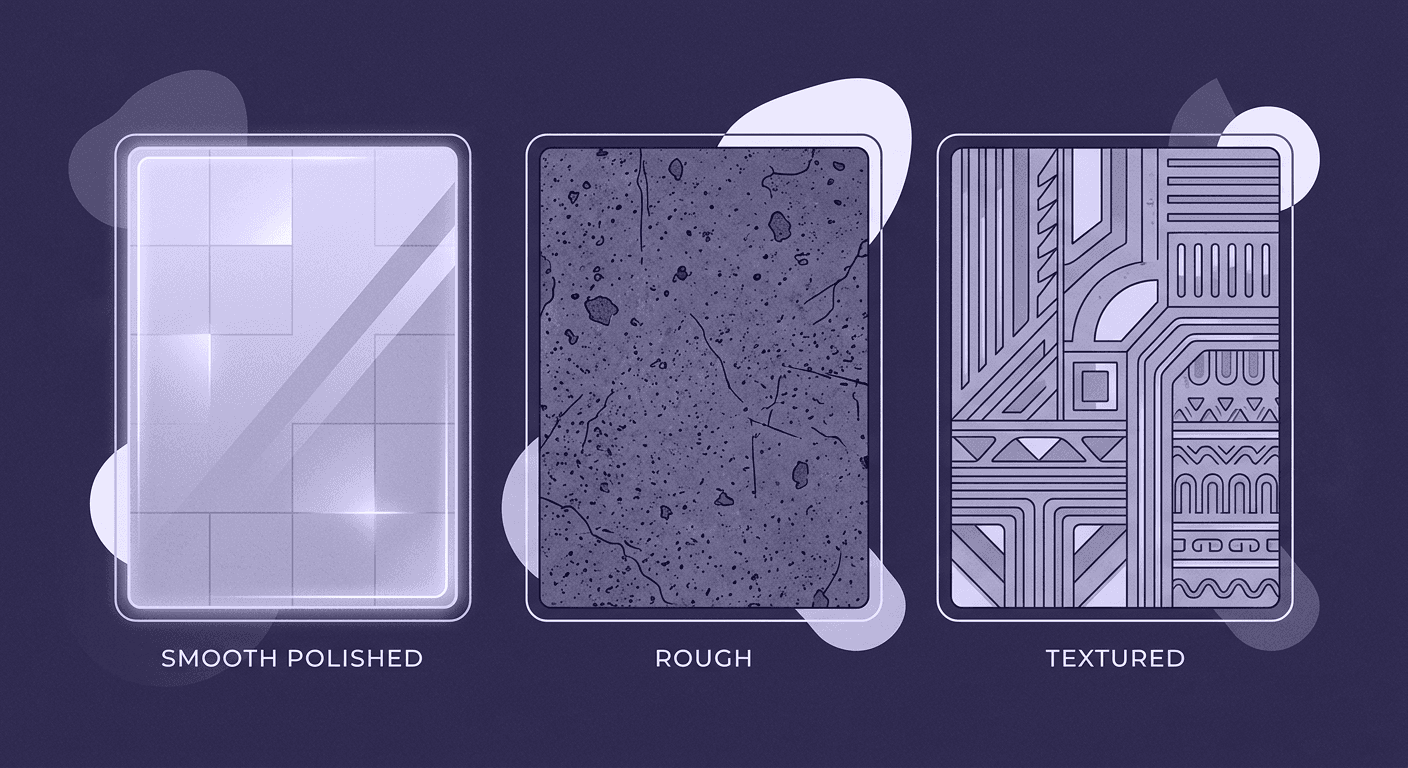

We need to start thinking of textures not as static images mapped to a surface, but as dynamic materials that can change and react. These materials are built on shaders and systems that respond to a variety of inputs.

What are dynamic materials? They are surfaces that tell a story over time. For example:

- Weather-reactive surfaces: A metal texture that begins to show rust after it rains in the virtual world. A stone path that becomes dark and glossy when wet, then slowly dries out in the sun.

- Proximity-based effects: A wall covered in bioluminescent fungi that glows brighter as a user gets closer. An ancient artifact that hums with energy when you reach out to touch it.

- Interaction-driven wear and tear: A grassy field where the grass becomes trampled and worn along the paths most players travel. A painted wall where the paint chips away when struck.

These aren't pre-baked animations. They are systemic, shader-driven effects that make the world feel persistent and responsive. They give the user a sense of presence and consequence, making the environment a character in itself, not just a backdrop.
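The stone path that darkens in the rain and dries in the sun is a good first experiment, so here is a minimal sketch of the logic. In a real project this would live in a shader driven by a wetness parameter; the class name, the rates, and the darkening and roughness values below are illustrative assumptions, not measured material data.

```python
from dataclasses import dataclass


@dataclass
class WetSurface:
    dry_albedo: tuple      # linear RGB, each channel in [0, 1]
    dry_roughness: float   # PBR roughness when fully dry
    wetness: float = 0.0   # 0 = dry, 1 = soaked

    def update(self, dt_s, raining, wet_rate=0.5, dry_rate=0.05):
        """Advance the simulation: surfaces soak quickly and dry slowly."""
        rate = wet_rate if raining else -dry_rate
        self.wetness = min(1.0, max(0.0, self.wetness + rate * dt_s))

    def shading_inputs(self, darkening=0.5, wet_roughness=0.1):
        """Blend the material parameters toward a darker, glossier look as wetness rises."""
        w = self.wetness
        albedo = tuple(c * (1.0 - darkening * w) for c in self.dry_albedo)
        roughness = self.dry_roughness + (wet_roughness - self.dry_roughness) * w
        return albedo, roughness


# Hypothetical stone path: two seconds of rain, then ten seconds of sun.
path = WetSurface(dry_albedo=(0.5, 0.5, 0.5), dry_roughness=0.8)
path.update(2.0, raining=True)
print(path.shading_inputs())
path.update(10.0, raining=False)
print(path.shading_inputs())
```

The same pattern covers the other examples in the list: swap the rain flag for distance to the player and you have the glowing fungi, or swap it for a per-area footstep count and you have the trampled grass.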

The future of XR space technologies: Connecting textures to data

This concept of dynamic materials becomes even more powerful when we connect it to the broader evolution of XR space technologies. Textures can become a visualization layer for real-time data, turning abstract information into something tangible and interactive.

How digital twins feed real-world data: Consider the concept of a digital twin, a real-time virtual replica of a physical object or system. How do you visualize the operational status of a factory machine within its digital twin? You use its texture. A material can be programmed to shift from green to red based on live temperature data from its real-world counterpart. A pipe's texture could show the flow rate of liquid inside it. The texture is no longer just decorative; it's a data dashboard you can walk through.
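The green-to-red machine is simple enough to sketch directly. The function name and the 60 °C and 90 °C thresholds are hypothetical placeholders; a real twin would take its limits from the equipment's specifications and its readings from whatever telemetry feed the plant exposes.

```python
def status_color(temp_c, ok_c=60.0, critical_c=90.0):
    """Map a temperature to linear RGB: green at or below ok_c, red at or above critical_c."""
    t = (temp_c - ok_c) / (critical_c - ok_c)
    t = min(1.0, max(0.0, t))  # clamp so out-of-range readings stay valid colors
    return (t, 1.0 - t, 0.0)


# Hypothetical readings arriving from the physical machine's sensor.
for reading in (45.0, 75.0, 95.0):
    print(reading, "->", status_color(reading))
```

Each frame, the returned color would be pushed into the material as a tint or emissive parameter, so the surface itself becomes the gauge.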

Integrating haptic feedback for textures you can feel: The final piece of the puzzle is closing the sensory loop. As haptic technology (in gloves, bodysuits, and controllers) becomes more sophisticated, we can associate textures with specific haptic signals. When a user runs their hand over a virtual surface, the visual texture of rough bark can be paired with a high-frequency, gritty haptic response. A smooth, metallic surface would trigger a completely different feeling. This creates a powerful multi-sensory connection, making the user believe in the material reality of the virtual object.
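One plausible way to wire this up is to tag each material with a haptic profile and scale it by how fast the hand is moving. Everything in this sketch is an assumption made for illustration: the profile values are made up, the tags are hypothetical, and actual haptic devices expose their own APIs and parameter ranges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HapticProfile:
    frequency_hz: float  # vibration frequency to request from the device
    amplitude: float     # peak strength in [0, 1]


# Hypothetical lookup table, authored alongside the visual materials.
PROFILES = {
    "bark": HapticProfile(frequency_hz=250.0, amplitude=0.8),   # gritty and strong
    "metal": HapticProfile(frequency_hz=60.0, amplitude=0.2),   # smooth and faint
}
DEFAULT_PROFILE = HapticProfile(frequency_hz=100.0, amplitude=0.3)


def haptic_signal(material_tag, hand_speed_m_s, max_speed_m_s=1.0):
    """Return (frequency_hz, amplitude) for a hand sliding over a tagged surface."""
    profile = PROFILES.get(material_tag, DEFAULT_PROFILE)
    # A resting hand feels nothing; faster strokes feel stronger, up to a cap.
    speed = min(1.0, max(0.0, hand_speed_m_s / max_speed_m_s))
    return profile.frequency_hz, profile.amplitude * speed


print(haptic_signal("bark", 0.5))
print(haptic_signal("metal", 0.5))
```

Keeping the haptic profile next to the material definition means artists can tune how a surface feels in the same place they tune how it looks.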

This is the real future. Not just looking at a world, but feeling it, and seeing it live and breathe around you. So, how do you start preparing your studio for this future today?

Moving into practice: A roadmap for your studio's pipeline

This all sounds exciting, but theory doesn't ship products. Let's ground these ideas in a practical roadmap you can start discussing with your team. The goal is to evolve your pipeline, not rip it out and replace it. It's about making deliberate, forward-looking choices.

How to integrate future trends in metaverse texturing today

Integrating the future trends in metaverse texturing starts with asking the right questions. During your next pipeline review or project kickoff, bring these to your art and engineering leads:

Where are our biggest time-sinks? Is it UV unwrapping? Baking normals? Creating texture variations? Identify the most painful, repetitive task in your current workflow. That’s your first candidate for an AI or procedural solution.

What could we do if asset creation were 10x faster? This isn't about working 10x harder. It's a thought experiment. Would you build bigger worlds? More detailed props? Spend more time on interaction design? The answer helps define your studio’s priorities.

Are we building static dioramas or living worlds? Discuss adding one small, dynamic material to your next project. It could be as simple as puddles that dry after a rain shower. Start small to build experience with shader-based, reactive surfaces.

Once you've identified the opportunities, focus on upskilling. This doesn't necessarily mean hiring new people; it means investing in your current talent:

- Skill up in proceduralism: Encourage one or two of your artists to go deep on a tool like Substance 3D Designer or Houdini. Their role will be to build a library of powerful, flexible materials for the rest of the team to use.

- Embrace technical art: The bridge between art and engineering is more critical than ever. Invest in your technical artists. They are the ones who will build the dynamic shaders, connect textures to game logic, and ensure these new techniques run efficiently.

- Learn to pilot the AI: Treat AI image generators as powerful interns. Teach your artists how to write effective prompts and how to quickly iterate on AI-generated assets, using them as a base for high-quality, art-directed final textures.

From asset creators to experience architects: Shifting the team mindset

The most important shift is one of perspective. Your team members are not just creating 3D models or PBR textures. They are architects of a user's experience. This reframing is crucial.

The discussion should evolve from "Is this texture photorealistic?" to "How does this texture enhance the user's journey?" A worn path isn't just a texture; it's a subtle signpost guiding the player. A flickering, unstable material isn't just a visual effect; it's a narrative tool that builds tension.

Balancing immediate project needs with this kind of long-term innovation is the core challenge of any leadership role. But by starting small, asking the right questions, and empowering your team with new skills, you can begin to build a pipeline that’s not just efficient, but future-proof. You'll be ready to move beyond just building worlds and start bringing them to life.

Winning the war by changing the rules

For years, that tug-of-war between fidelity and framerate has defined our work. We pulled, we compromised, and we shipped experiences that were often a shadow of our original vision. It felt like a battle of trade-offs you could never truly win.

But the trends we’ve walked through (smarter asset creation, advanced rendering, and interactive surfaces) aren’t just new ropes for the game. They’re a way to call the game off entirely. These tools don't just help you manage limitations; they work together to dismantle them.

This shift frees you and your team from the grind of being asset accountants and performance police. It lets you become what you were always meant to be: experience architects.

The most important question is no longer, “What will this cost us in performance?” but rather, “What story should this surface tell?” It’s about designing worlds that react, that remember, and that feel alive to the touch. You’ve got the vision. Now, you finally have a toolkit that can keep up.

Mira Kapoor

Mira leads marketing at Texturly, combining creative intuition with data-savvy strategy. With a background in design and a decade of experience shaping stories for creative tech brands, Mira brings the perfect blend of strategy and soul to every campaign. She believes great marketing isn’t about selling—it’s about sparking curiosity and building community.

Latest Blogs

A Practical Guide for How to Choose The Right Concrete Texture fo...

3D textures

PBR textures

Mira Kapoor

Apr 29, 2026

VR Textures in Unreal Engine: A Beginner’s Guide to Setup, Optimi...

3D textures

AI in 3D design

Max Calder

Apr 27, 2026

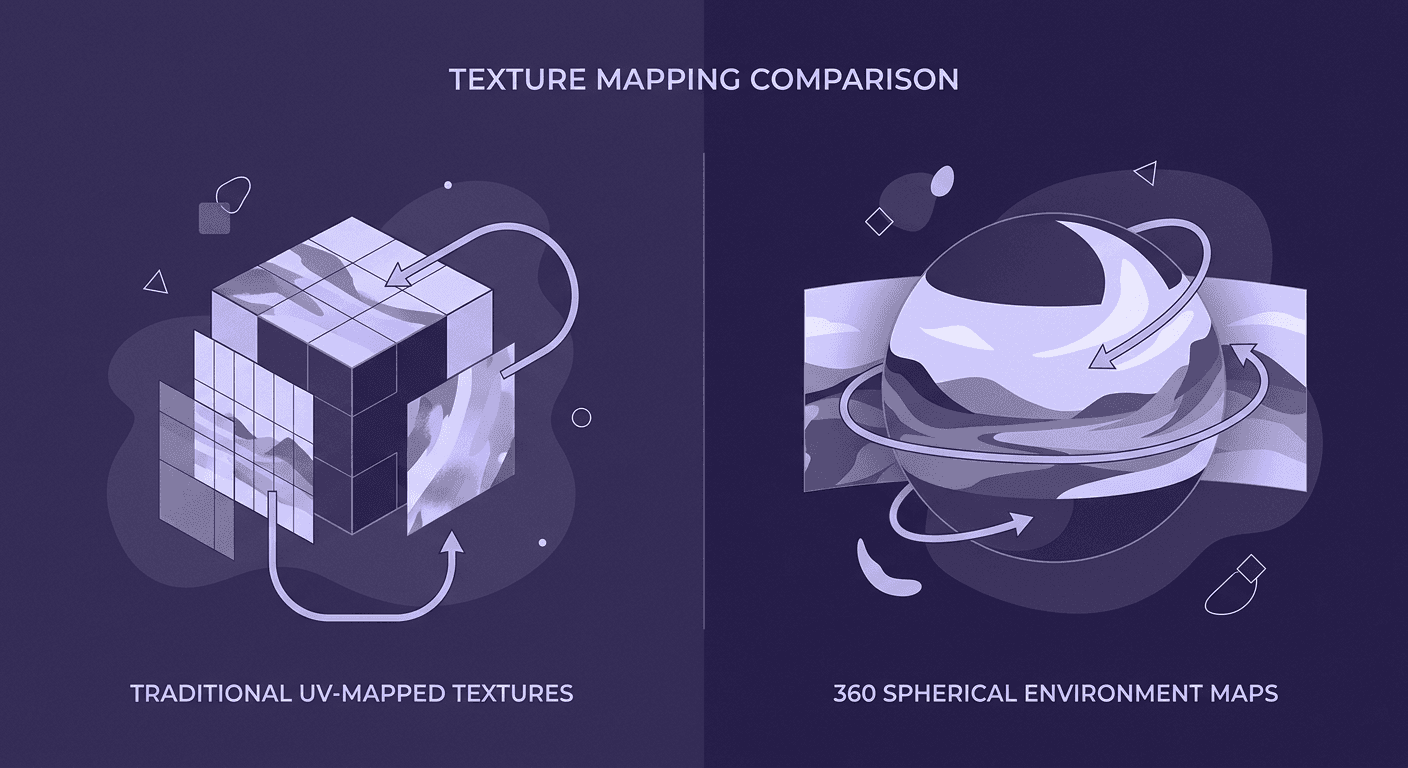

360 Environment Textures vs Traditional Textures: Key Differences...

AI in 3D design

PBR mapping

Max Calder

Apr 24, 2026